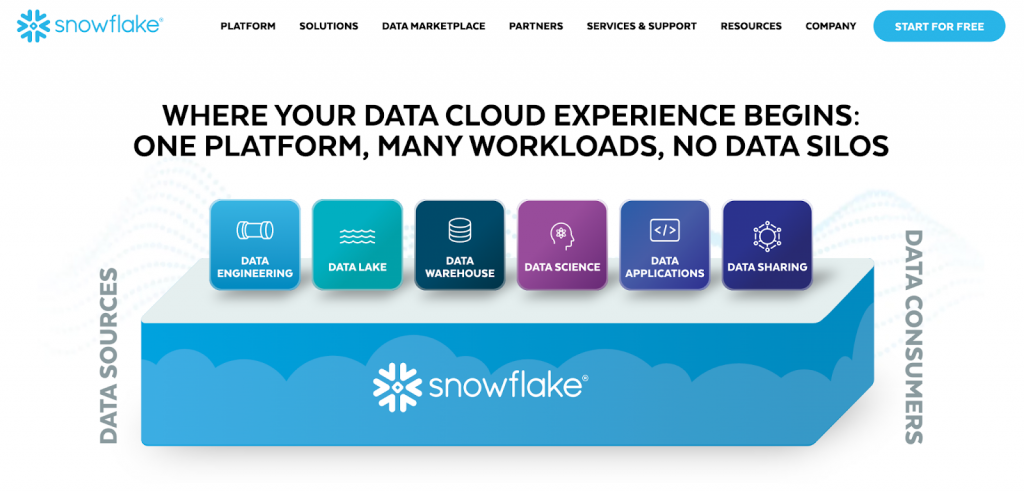

Snowflake is a cloud-based data warehouse that uses innovative approaches to managing big data. It’s often used for data consolidation, BI and analytics, data sharing, and machine learning. To maximize these features’ effect, adding Snowflake ETL tools to your data stack would be a plus. Such solutions bring data from disparate sources into a data warehouse and establish connections between the data ecosystem elements, like neural connections in the brain.

This article explores several reputable Snowflake ETL solutions and discusses their business benefits. It also provides tips on selecting the best solution for your particular needs.

Table of Сontents

- Understanding ETL for Snowflake

- Benefits of Using Third-Party Snowflake ETL Tools

- 4 Things to Consider When Selecting ETL Tools for Snowflake

- Top 10 Snowflake ETL tools

- How to Import Data into Snowflake Using Skyvia in Minutes?

- Conclusion

- FAQ

Understanding ETL for Snowflake

What Is ETL?

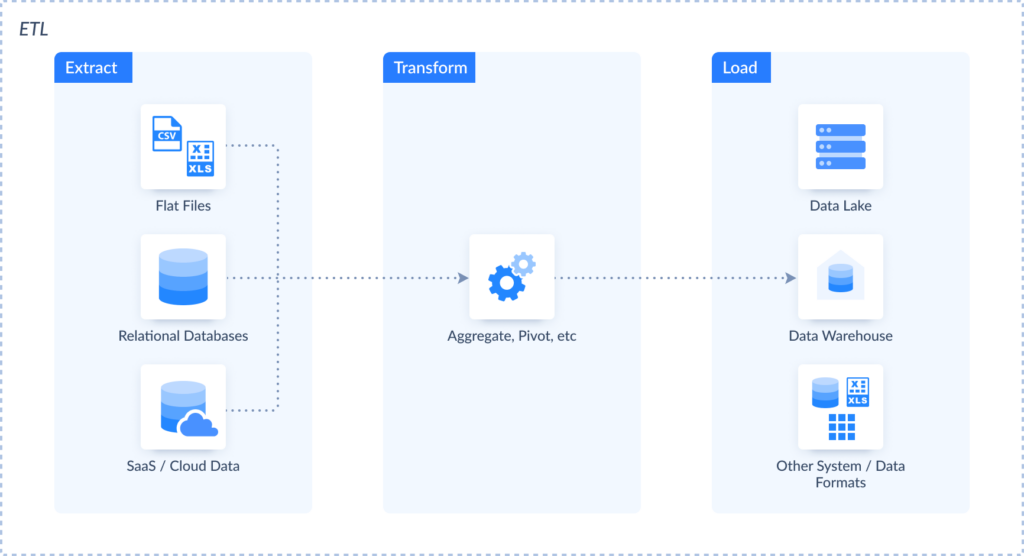

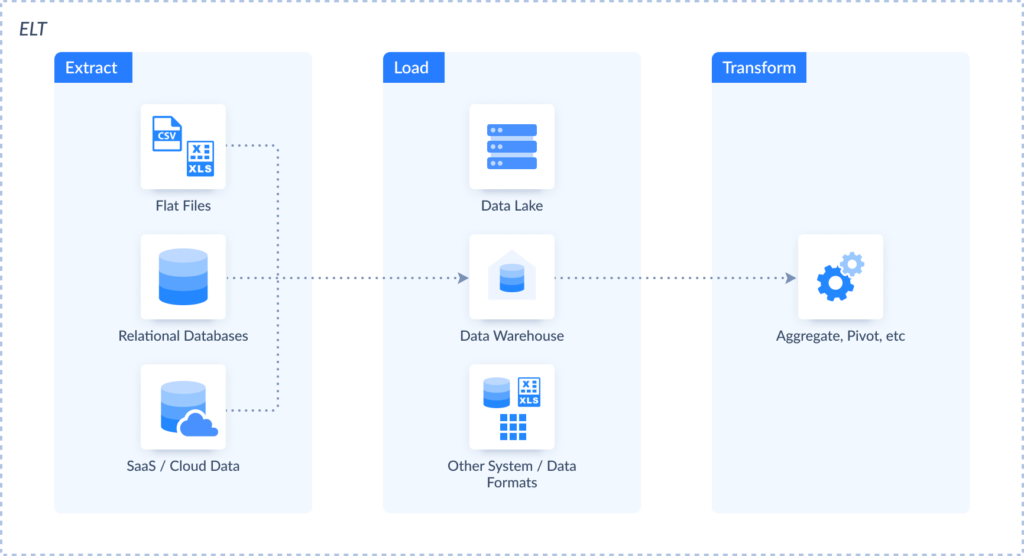

ETL is the abbreviation for the ‘Extract, Transform, Load’ approach for dealing with data.

- The Extract stage involves the collection of data from multiple sources, from legacy systems to modern SaaS applications.

- The Transform step applies data transformation techniques, basic or advanced, depending on the current requirements.

- The Load step transfers data to the indicated destination, usually a database or a data warehouse.

You must create Snowflake ETL pipelines to move data from dispersed sources (flat files, databases, SaaS apps, etc.) into this data warehouse.

What Is ELT?

In certain configurations, data loading takes place before the transformation stage. This approach is known as ELT (Extract, Load, Transform), and it’s also used with Snowflake. With ELT, data is transformed and processed on the data warehouse side using dbt, for instance, which makes this approach faster and more adapted to the current data volumes.

Here are the main differences between ELT and ETL.

| ETL | ELT | |

|---|---|---|

| Destinations | ELT is mainly used to load data into a cloud data warehouse or a data lake. | ETL doesn’t impose limitations on the destination – data can be loaded into any supported source. |

| Data types | ELT works with structured, semi-structured, and unstructured data. | ETL supports structured data. |

| Transformations | Transformations on the destination side usually require programming skills. | Transformations are made after data extraction and before its loading to the destination. |

Note: Apart from ETL and ELT approaches, Reverse ETL is also often used. It allows you to move data from Snowflake to other business apps for data activation.

Why to Use ETL for Snowflake?

It’s difficult to form a general idea of the company’s performance when all the organizational data is scattered across different systems. So, it’s essential to consolidate it in Snowflake to obtain a unified view of business health. Both ELT or ETL approaches can help with this since they automate data collection, modify data along its transfer, and optimize its loading into Snowflake.

There are other good reasons to use ETL for Snowflake:

- Apply multistage transformations on data intended for analytical purposes.

- Employ elasticity, scalability, and flexibility for changing data loads.

- Configure ETL pipelines and automated workflows in a visual interface.

- Enjoy optimized cost and performance for big data cases.

- Take advantage of real-time or near-real-time data processing.

- Obtain a centralized repository with no-code data movement.

- Extracting insights with the data blending possibilities.

- Ensure data security and privacy on transfer and storage.

Native ETL Capabilities of Snowflake

The founders of Snowflake also acknowledge the significance of ETL processes, so they offer built-in data integration options directly inside this data warehouse. You can use them to build and set up data pipelines.

- Snowpipe. This tool is designed for real-time data ingestion and loading with low latency.

- Streams and Tasks. This solution implements the Change Data Capture (CDC) technology and automated SQL execution, allowing you to monitor and react to data modifications effectively.

- Zero-Copy Cloning. This tool creates data clones without duplicates. It can be used for testing and data manipulation without the extra overhead of data replication.

- Stored Procedures. This option executes logic for data manipulations directly in Snowflake.

Note that constructing data pipelines with these features is a complex procedure. It involves a modular approach, where creating integration dataflows will be like a LEGO puzzle. This will take much time and effort and require regular maintenance. Meanwhile, third-party data integration tools deprive you of such complications, allowing you to set up everything in several clicks. It’s possible to get some of these tools from Snowflake Marketplace.

Benefits of Using Third-Party Snowflake ETL Tools

Unlike the options mentioned above, the solutions by external providers don’t limit you to using only Snowflake. They support multiple data sources and destinations, allowing you to build data pipelines of different configurations. Such solutions also provide a range of additional features that simplify the data integration process.

Pre-built Connectors

Data integration services come with pre-built connectors that allow users to connect to hundreds of data sources (apps, databases, flat files, etc.). This streamlines the integration process since non-tech business users (marketers, salespeople, etc.) can build data pipelines independently without IT experts’ help.

Transformation Options

All ETL tools include transformation options, though their complexity varies from one solution to another. Some offer simple transformations (cleansing, standardization, etc.), while others provide complex multistage operations (duplicate removal, lookup, etc.).

Manual Input Reduction

Another benefit of modern ETL solutions is their advanced automation options. They simplify data management, eliminating the manual input and minimizing the probability of human error.

Scheduling

Data integration tools usually contain scheduling options for regular data transfers. That way, you can select specific intervals at which data will travel from selected sources to Snowflake. Scheduling options ensure that your centralized repository always contains the freshest data ready for reporting tasks.

Scalability

When businesses rapidly grow, they experience spikes in data loads as well. At the same time, companies may experience drops in data volumes due to external economic factors, for instance. Luckily, modern data platforms can handle changing requirements to data automatically, with the possibility of scaling up and down upon request.

Monitoring

Snowflake ETL tools embed logging and error-handling features for a better overview of the integration results. They also send notifications via email or SMS to help users address any issues quickly.

4 Things to Consider When Selecting ETL Tools for Snowflake

There are various ETL tools for Snowflake in the market these days. Thus, it might be confusing and unclear which one would suit you best. In the table below, find the CUPS criteria list you need to consider when evaluating data integration tools and selecting the one for your organization.

- Сonnectivity. Verify whether the ETL solution of interest has pre-built connectors to Snowflake and other data sources you regularly use in your business workflows.

- Usability. Decide whether a chosen server is easy to use. See whether it offers a zero-code interface or requires programming expertise and compare it with your team’s qualifications and needs for building data pipelines.

- Pricing. Explore the ETL software’s pricing models and see which one matches your budget.

- Scalability. Investigate how a chosen tool can handle changing data volumes without impacting performance.

Top 10 Snowflake ETL tools

Let’s see in detail what the most popular choices for the ETL Snowflake solutions are. Let’s start with the comparison table.

| Platform | G2 Rating | Pros | Cons |

|---|---|---|---|

| Skyvia | 4.8 out of 5 | – no-code interface – 200+ data sources – data manipulation features – automatic schema detection and mapping | – no phone support |

| Integrate.io | 4.3 out of 5 | – drag-and-drop, no-code interface – 150+ pre-built connectors | – error messages are unclear – debugging is time-consuming |

| Apache Airflow | 4.5 out of 5 | – create ETL pipelines with Python | – requires Python coding skills |

| Matillion | 4.4 out of 5 | – data transformations can be performed with SQL queries or via GUI | – lack of documentation |

| Stitch | 4.4 out of 5 | – custom connectors to data sources | – lacks data transformation options |

| Fivetran | 4.2 out of 5 | – data transformation options with dbt Labs – data governance options | – lack of data management options |

| Hevo Data | 4.4 out of 5 | – 150+ built-in connectors | – no way to organize or categorize data pipelines |

| Airbyte | 4.5 out of 5 | – 400+ connectors with the possibility of customization | – limited transformation capabilities |

| StreamSets | 4.0 out of 5 | – 50+ data systems – custom data sources and processors with JavaScript, Groovy, Scala, etc. | – library dependency issues |

| Astera | 4.3 out of 5 | – pre-built transformation functions | – resource-intensive |

Skyvia

Skyvia is a cloud-based data integration platform for a broad range of tasks. It provides a comprehensive solution for data integration along with tools for backup, query, and workflow automation.

Let’s explore the Data Integration product in detail and see how it can help you with moving data from and to Snowflake.

- Import is a wizard-based tool that allows you to construct ETL and Reverse ETL pipelines, apply data transformations, and map source and destination data structures without coding.

- Export is a zero-code visual tool that helps you export data from Snowflake into a CSV file and save it either on a storage platform or on your computer.

- Synchronization is a no-code solution that supports bi-directional data sync between SaaS apps and databases, ensuring that data remains consistent across platforms.

- Replication is a wizard-based tool that allows you to build ELT pipelines to copy data from source apps to a database or a data warehouse without coding.

- Data Flow is a visual pipeline builder that allows you to design more complex data pipeline diagrams with compound transformations. With this tool, it’s possible to integrate multiple data sources into a pipeline and implement multistage data transformations.

- Control Flow is a visual designer that allows you to orchestrate complex integration scenarios by managing the execution order of various tasks, including data import, export, replication, and synchronization.

Reviews

G2 Rating: 4.8 out of 5 (based on 200+ reviews).

Best for

- Businesses of any size and any industry.

Key features

- A no-code interface allows users to construct data pipelines visually.

- Simple and advanced data integration scenarios.

- Skyvia supports over 200 data sources (CRM systems, e-commerce platforms, payment processors, databases, data warehouses, marketing automation platforms, etc.).

- Data manipulation features (sorting, filtering, searching, expressions, and others) to enhance data accuracy.

- Web-based access from any browser and platform.

- Automatic schema detection and mapping.

Disadvantages

- Data volume and update frequency limitations in the Freemium plan.

- No phone support yet.

Pricing

Skyvia offers a variety of pricing plans, from a free tier to complex enterprise solutions. The price for the Data Integration subscription starts at $79 per month.

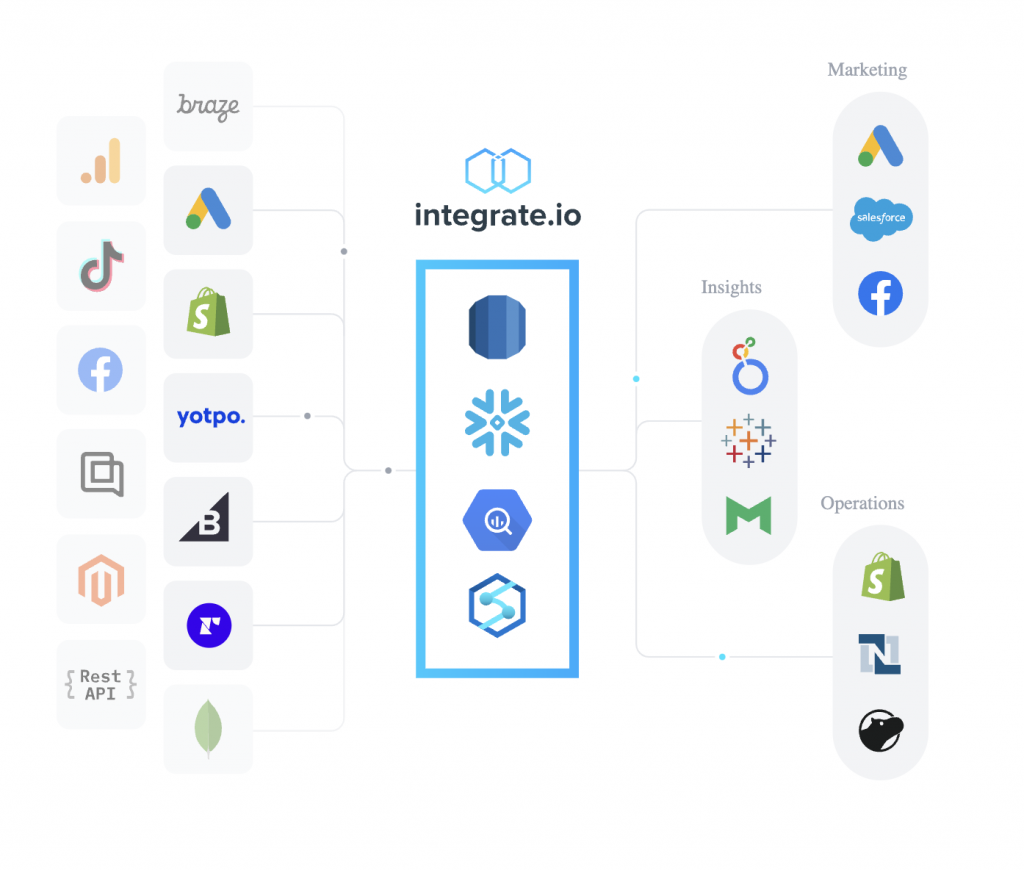

Integrate.io

Integrate.io is a no-code platform that allows users to connect with multiple data systems in the cloud. This tool allows you to visually design data pipelines on a diagram by dragging and connecting components – sources, transformations, and destinations. It supports ETL, reverse ETL, ELT, CDC, API generation, etc.

Integrate.io offers advanced customization options for development. You can easily design pipelines for batch and real-time data processing, applying the needed transformations on the go.

Reviews

G2 Rating: 4.3 out of 5 (based on 200+ reviews).

Best for

- Best suited for organizations with complex data integration and transformation needs.

Key features

- The drag-and-drop, no-code interface simplifies the process of data pipeline creation. It allows users to set up connections and define transformations visually.

- There are 150+ pre-built connectors, including Snowflake and SaaS services.

- Integrate.io provides 360-degree support assistance for users via chat, email, phone, and Zoom.

Disadvantages

- Debugging can be time-consuming since it requires a detailed check of logs.

- Error messages are unclear.

Pricing

There are four different pricing plans for this solution, and each considers cost per credit, feature set, expected data volume, and some other principal aspects.

Apache Airflow

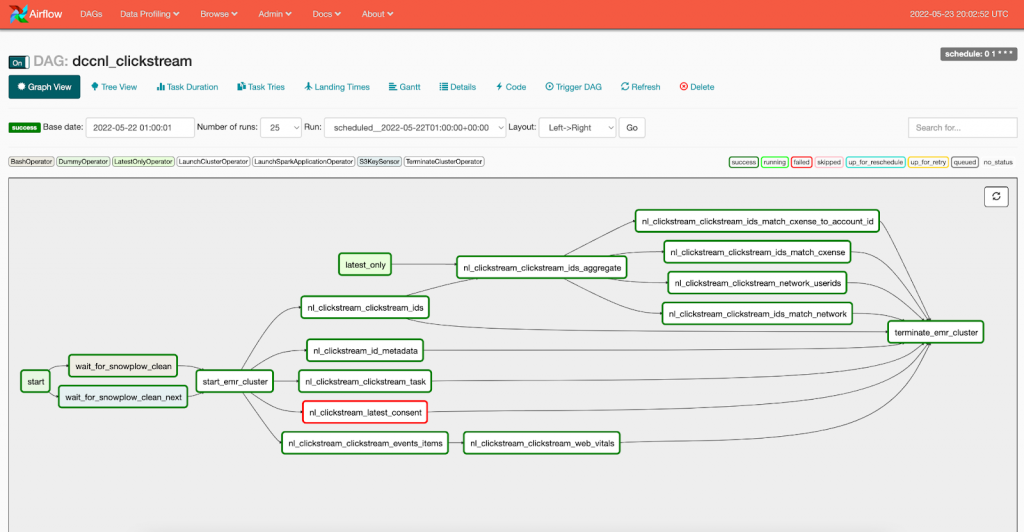

Apache Airflow is an open-source platform suitable for creating dataflows with batch processing. It allows users to set up and manage data pipelines programmatically while the system automates and monitors integration processes.

Airflow implements data pipelines within Direct Acyclic Graphs, also known as DAGs. Each DAG comprises multiple tasks that can be arranged as needed. It’s also possible to establish cross-task dependency by defining the execution logic.

Reviews

G2 rating: 4.5 out of 5 (based on nearly 100 reviews).

Best for

- Companies with a complex business logic orchestrated in Python.

Key features

- Allows users to create and manage ETL pipelines with Python.

- Supports cloud data warehouses like Snowflake and on-premises data sources.

- It can be easily integrated with version control systems like Git.

Disadvantages

- Requires Python coding skills and technical expertise.

- It’s difficult to modify data pipelines once they are launched.

Pricing

Since Apache Airflow is an open-source tool, it can be installed and used for free.

Matillion

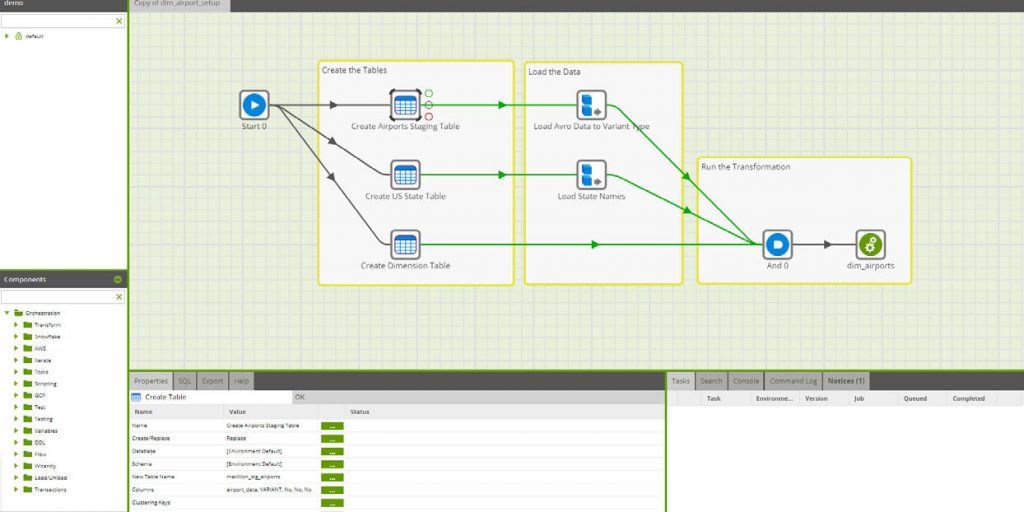

Matillion is a cloud-native data integration tool that provides an intuitive user interface to develop data pipelines. There are two products Matillion offers: Data Loader for moving data from any service to the cloud and Matillion ETL to define data transformations and build data pipelines on the cloud.

Matillion ETL is a fully-featured data integration solution for creating ETL and ELT pipelines within a drag-and-drop interface. This tool can be deployed on your preferred cloud provider.

Reviews

G2 rating: 4.4 out of 5 (based on 80 reviews).

Best for

- Better for enterprises than SMBs.

Key features

- Provides connectors to both cloud and on-premises data systems.

- Contains features for data orchestration and management.

- Data transformations can be performed either with SQL queries or via GUI by creating transformation components.

Disadvantages

- Lack of documentation describing features and instructions for their configuration.

- There is no option to restart tasks from the point of failure. The job needs to be restarted from the beginning.

Pricing

The cost of Matillion ETL is credit-based, meaning that it depends on the data units processed.

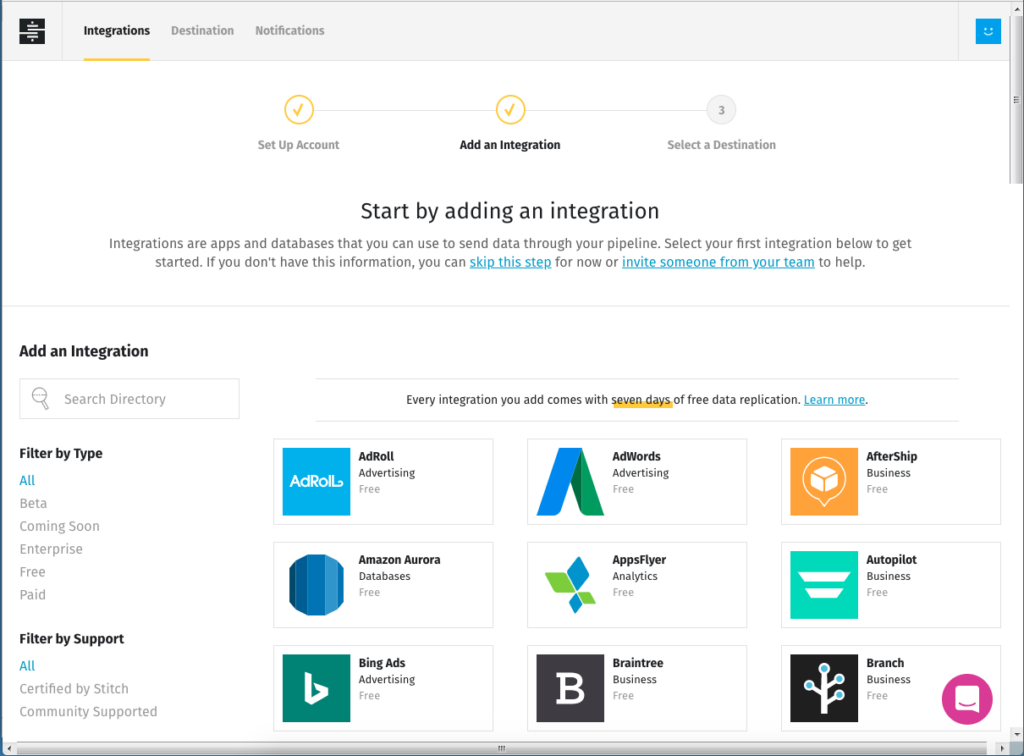

Stitch

Stitch offers an extensible data integration platform used to connect to many databases and SaaS applications. It also comprises advanced and enterprise features, including alerts on Slack, notifications on DataDog, etc.

This solution provides an easy-to-use orchestration tool to create and monitor your data pipelines on the go. Once data is extracted from the source, Stitch saves and encrypts it in the internal pipelines. Then, it performs basic transformations compatible with the target system and loads data to the destination.

Reviews

G2 Rating: 4.4 out of 5 (based on 60+ reviews).

Best for

- Perfect for developers in small to medium-sized businesses.

Key features

- Allows you to create custom connectors to data sources.

- Provides graphical UI to set up Stitch and configure ETL pipelines.

- Includes a dashboard for data pipeline tracking and monitoring.

- Schedules data loading at predefined times.

Disadvantages

- Lacks data transformation options.

- Supports only several data destinations, depending on the selected subscription.

Pricing

Stitch offers three pricing tiers. The cost starts at $100 per month.

Fivetran

Fivetran is an automated data movement platform that allows users to extract data from 500+ sources and load it into a data warehouse. This service is a good option for data consolidation in Snowflake, making it a single source of truth.

Fivetran relies on automation to effectively handle schema changes, significantly minimizing manual input. This makes it a popular choice for streamlined data replication with the ELT approach.

Reviews

G2 Rating: 4.2 out of 5 (based on 380+ reviews).

Best for

- Mid-size and large enterprises in the financial industry.

Key features

- Implements data governance options for role-based access controls and metadata sharing.

- Provides data transformation options with dbt Labs.

- Ensures advanced security with private networks, column hashing, and other approaches.

- Offers automated pipeline setup and monitoring with minimal maintenance.

Disadvantages

- Limited support for Apache Kinesis and Apache Aurora data services.

- Lack of data management options.

Pricing

Fivetran provides four pricing plans, including a free tier. The price depends on the data volumes, number of users, available features, and connectors.

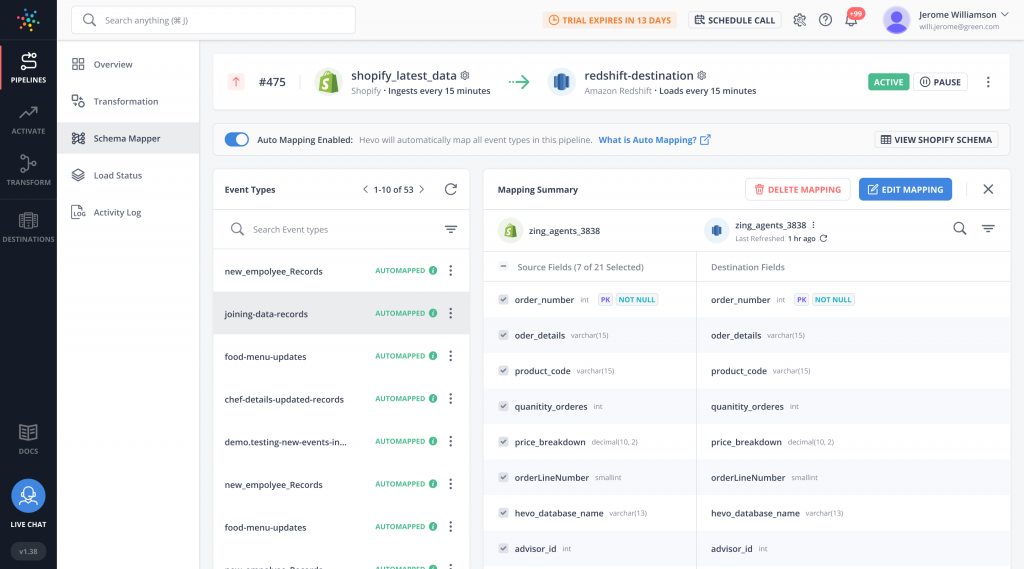

Hevo Data

Hevo Data is a SaaS ETL tool that allows users to create data pipelines without code. It helps them quickly load data from different sources to Snowflake, applying transformations on the go. This platform comprises both visually configurable transformation blocks and Python code-based transformations.

Hevo Data also automatically detects and maps the source schema to the destination. It can handle schema drift, signaling that the data structure changes on the source. All this tends to reduce the manual input for schema management.

Reviews

G2 Rating: 4.4 out of 5 (based on 200+ reviews).

Best for

- Companies with limited technical resources can benefit from Hevo’s no-code interface.

Key features

- Anonymizes data before loading it to the destination.

- Provides 150+ built-in connectors to databases, SaaS platforms, etc.

- Near real-time data loading to certain destinations.

Disadvantages

- There is no way to organize or categorize data pipelines.

- Although Hevo Data supports real-time data streaming, latency can sometimes be an issue, especially for large datasets.

Pricing

Hevo Data offers four pricing plans, including a free tier with up to 1M events per month. For paid plans, the price starts at $239 per month.

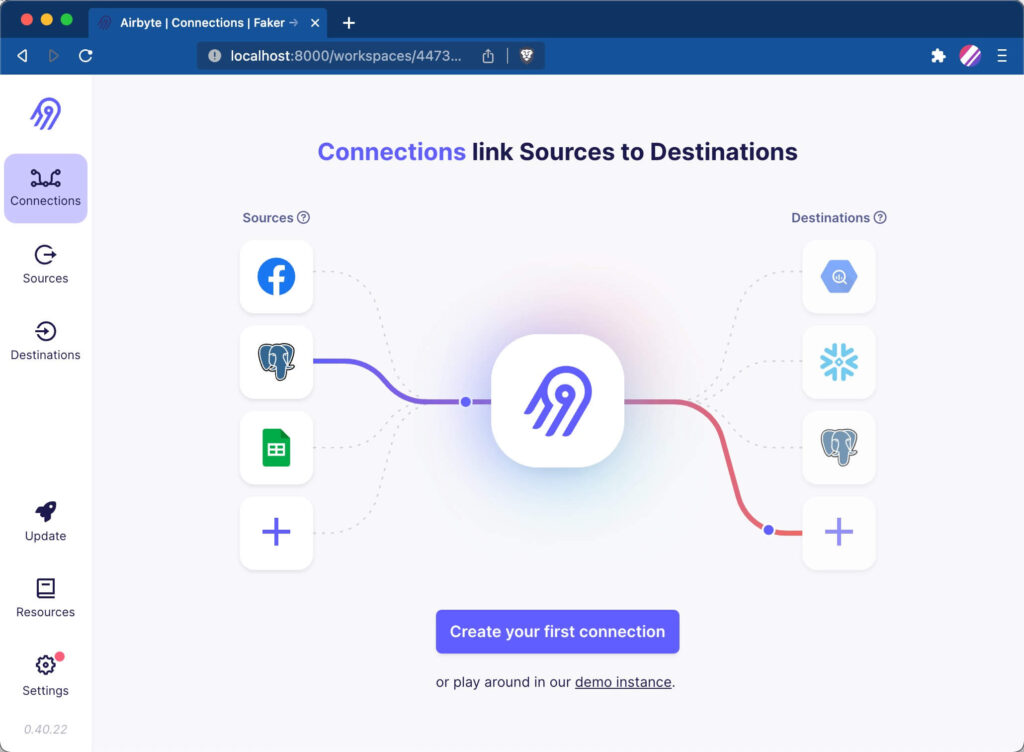

Airbyte

Airbyte is an open-source data movement platform that can be used for Snowflake ETL. This tool also has a large number of contributors who create custom connectors.

Airbyte also offers flexible deployment options that are suitable for businesses with different infrastructure configurations. However, this solution primarily focuses on Extract, Load, Transform (ELT), which makes it suitable for those who need to load raw data into Snowflake.

Reviews

G2 rating: 4.5 out of 5 (based on nearly 50 reviews).

Best for

- It’s an open-source solution suitable for both SMBs and enterprises.

Key features

- Offers over 400+ connectors with the possibility of customization.

- Supports incremental data loading.

- Provides detailed logging and error detection options.

Disadvantages

- Limited transformation capabilities.

- Data-intensive pipelines can be resource-consuming and thus costly.

Pricing

Open-source deployment on your host is free. The cost of the cloud-hosted deployment can be discussed with sales.

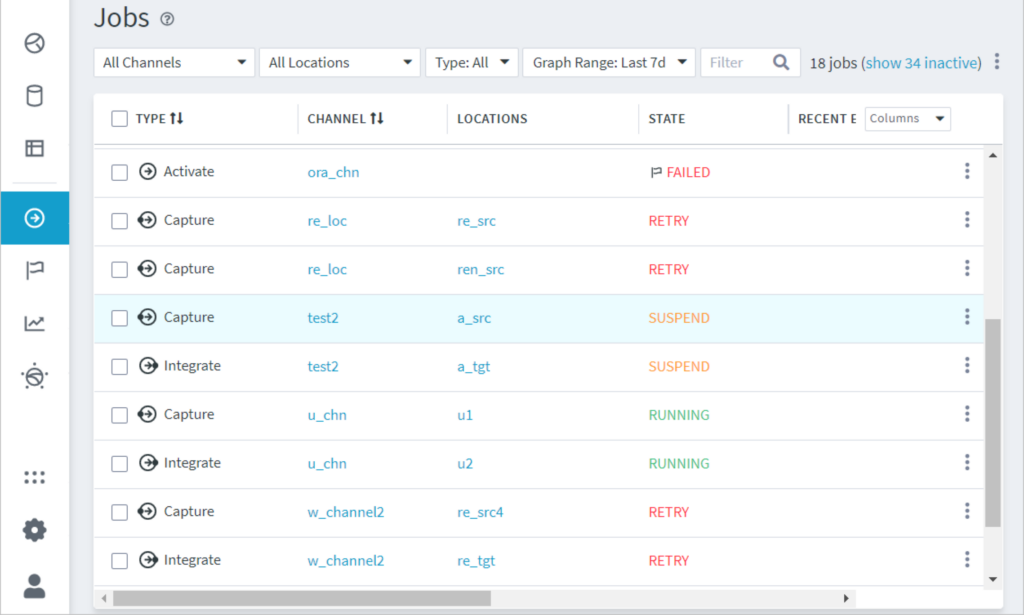

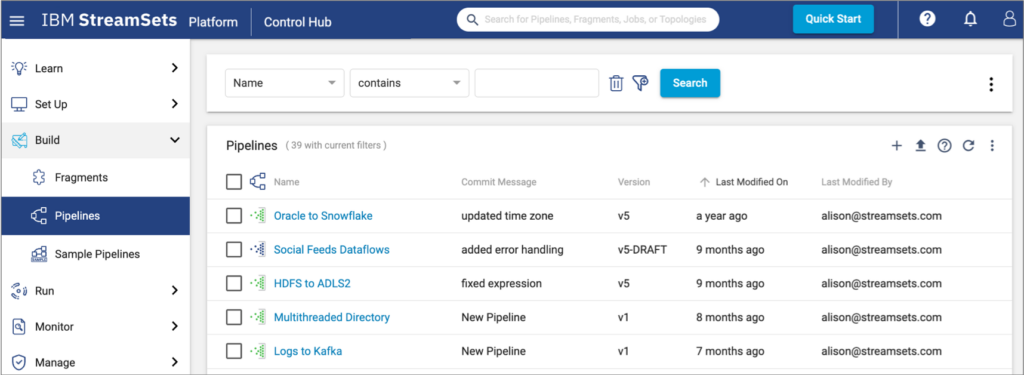

StreamSets

StreamSets is a cloud-based, fully managed ETL tool for Snowflake developed by IBM. It allows users to create and manage smart streaming data pipelines using a graphical user interface. StreamSets supports integration across hybrid and multi-cloud environments.

Reviews

G2 rating: 4.0 out of 5 (based on nearly 50 reviews).

Best for

- Perfect for large enterprises.

Key features

- Provides the possibility to add custom data sources and processors with JavaScript, Groovy, Scala, etc.

- Has extensive documentation with a thorough description of product functionality.

- Supports 50+ data systems, including streaming sources Kafka and MapR.

Disadvantages

- Lacks extensive coverage of SaaS input sources.

- Copying the same pipelines to different servers might cause library dependency issues.

Pricing

The cost of StreamSets can be discussed with IBM’s sales managers.

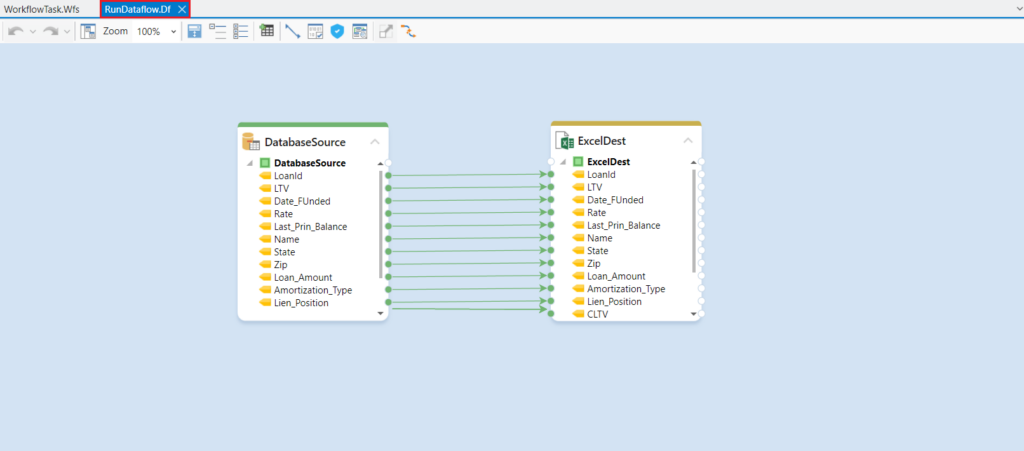

Astera

Astera is a comprehensive data management platform designed for various data-related operations. It allows users to build ETL scenarios involving 50+ connectors to cloud apps, databases, and data warehouses.

This tool also provides a wide range of features to automate data pipelines and improve data accuracy. For instance, Astera offers a library of built-in transformations and data mapping features. It also provides scheduling options that enable jobs to run in intervals.

Reviews

G2 rating: 4.3 out of 5 (based on nearly 50 reviews).

Best for

- Best suited for SMBs and enterprises.

Key features

- Has built-in connectors to databases, cloud storage, flat files, and data warehouses, including Snowflake.

- Offers pre-built transformation functions for shaping data with no code.

- Applies AI-powered and role-based mapping.

- Provides automated quality data management to prepare data before loading it into Snowflake.

Disadvantages

- The steep learning curve for non-technical users.

- This tool can be resource-intensive, especially when handling large datasets or complex data transformations.

Pricing

The Astera prices are discussed with their sales representatives.

How to Import Data into Snowflake Using Skyvia in Minutes?

Let’s explore an example of configuring an integration pipeline using one of the Snowflake ETL tools – the Skyvia platform.

Note: Skyvia supports both ETL and ELT workflows, so you can select the scenarios that currently meet your business requirements.

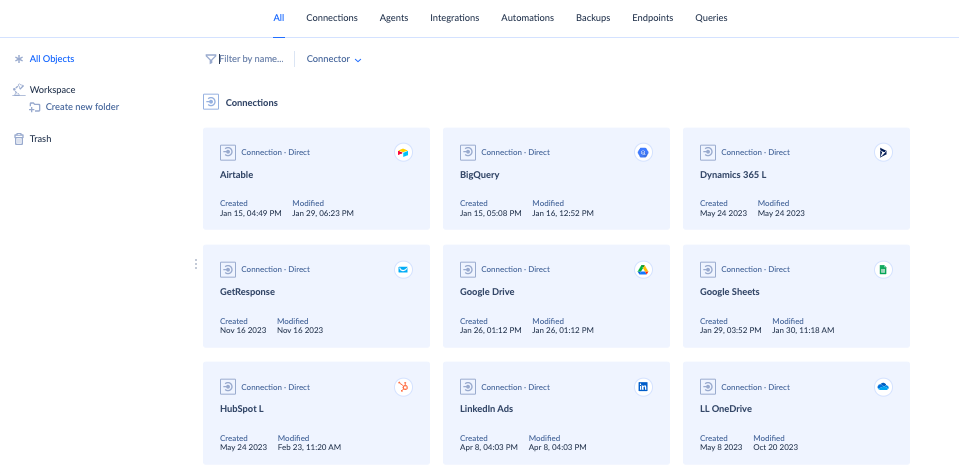

Step 1. Configure Source Connector

Skyvia supports over 200+ data sources, including cloud apps, databases, data warehouses, storage systems, and flat files. To set up the required connectors, take the following steps:

- Log into your Skyvia account.

- Navigate to + Create New -> Connectors.

- Select the tool that interests you and click on it.

- Follow the step-by-step instructions provided within the setup screen of the selected connector.

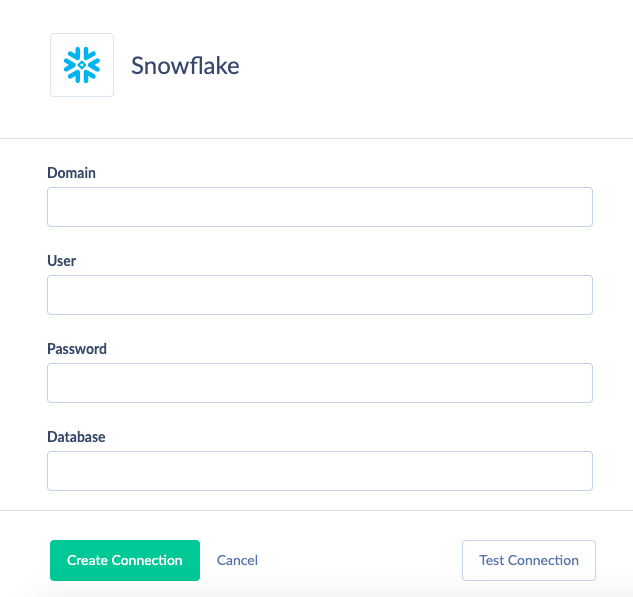

Step 2. Configure Snowflake Connector

In your Skyvia account, go to + Create New -> Connectors and select Snowflake from the list.

You need to specify the following required connection parameters:

- Domain is a Snowflake account domain.

- User is a username with which to log in.

- Password is a password with which to log in.

- Database is a database name.

Snowflake also envisages the following optional connection parameters:

- Schema is a current schema name in the database. Although this parameter is optional, you need to specify it when using replication or import in the Bulk Load mode.

- Warehouse is the name of the warehouse used for a database.

- Role is a role name used to connect.

Step 3. Set up integration scenario

As mentioned above, Skyvia provides several tools for moving data to and from Snowflake. In this example, we use the Replication tool to create an ELT pipeline and transfer data from Salesforce and Snowflake.

- In the top menu, go to +Create New -> Replication.

- Select Salesforce as a source connector and Snowflake as a destination.

- Select the fields for replication.

- Schedule the replication by specifying the exact time and date at which it should take place or indicate the intervals. Make sure that the Incremental Updates checkbox is selected.

- Save the replication and click Run to start it.

- Check the status of the integration scenario in the Monitor tab.

Conclusion

Along with the native ETL capabilities, various third-party data integration tools allow users to extract, load, and transform data into Snowflake. This article has presented some popular ETL tools for Snowflake.

We have also reviewed and compared the ETL and ELT concepts and explored their implementation for Snowflake data integration using Skyvia. Feel free to try Skyvia to extract data from your favorite apps, apply transformations to it, and load it into Snowflake.

FAQ for Snowflake ETL Tools

Does Snowflake use SQL or Python?

Yes, SQL and Python can be used with Snowflake. SQL is often used to query and transform data. Meanwhile, Snowflake Snowpark allows you to use Python directly within Snowflake to process data, run complex data analytics scenarios, and execute machine learning tasks.

What is an ETL pipeline?

An ETL pipeline is a set of coordinated processes for data collection, transformation, and loading. It aims to move data from sources into Snowflake or other data warehouses or databases.

How to build an ETL pipeline in Snowflake?

Feel free to use Skyvia’s Replication and Import to set up data pipelines in a GUI with no coding.