The explosion of data has changed the rules. Every team — from analytics to engineering — is under pressure to unify, clean, and move massive volumes of data faster than ever. And when your infrastructure runs on AWS, the stakes are even higher. Whether you’re storing data in S3, running analytics in Redshift, or managing applications in RDS, your ETL pipeline is the backbone of performance, scalability, and insight.

But here’s the catch: not all ETL tools are created equal — especially in the AWS ecosystem.

Choosing the right AWS ETL tool means balancing performance and cost, ensuring compatibility with your architecture, avoiding vendor lock-in, and picking a platform your team can actually work with. Get it wrong, and you risk bloated costs, pipeline failures, or worse — inaccurate data.

That’s why in this guide, we’ll cut through the noise and give you a side-by-side comparison of the best ETL tools for AWS — including both native services like AWS Glue and third-party platforms like Skyvia, Hevo, and Stitch.

You’ll learn:

- What AWS-native and external ETL tools can do (and where they differ)

- Key selection criteria: speed, ease of use, pricing models, extensibility

- Which tools suit your use case: batch vs real-time, SQL vs no-code, etc.

By the end, you’ll have a clear framework to choose the best ETL tool for your AWS environment — whether you’re running a lean startup or a multi-petabyte enterprise stack.

Table of contents

- What is AWS ETL

- Top AWS ETL Tools: A Comparative Review (2025)

- How to Choose the Best AWS ETL Tool

- Native AWS ETL Tools Included in the ETL Process

- Conclusion

- FAQ

What is AWS ETL

In data processing, Extract, Transform, and Load (ETL) means extracting data from various sources, transforming it to make it usable and insightful, and loading it into a destination such as a database, data warehouse, or data lake. A key advantage of running ETL in the cloud is elastic scalability, often at a lower cost than comparable on-premises setups.
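To make the three stages concrete, here is a minimal sketch in Python using only the standard library. The CSV content, column names, and `orders` table are hypothetical. An in-memory SQLite database stands in for a real destination such as Redshift or RDS.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with messy casing, stray spaces, and a missing amount.
RAW_CSV = """order_id,customer,amount
1, Alice ,19.99
2,BOB,5
3,carol,
"""


def extract(text: str) -> list[dict]:
    """Extract: read rows from a CSV source (an in-memory string here)."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: trim and title-case names, cast types, drop rows without an amount."""
    clean = []
    for row in rows:
        if not row["amount"].strip():
            continue  # incomplete record: skip it instead of loading bad data
        clean.append((int(row["order_id"]), row["customer"].strip().title(), float(row["amount"])))
    return clean


def load(rows: list[tuple], conn: sqlite3.Connection) -> int:
    """Load: write the cleaned rows into a destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()
    return len(rows)


conn = sqlite3.connect(":memory:")
loaded = load(transform(extract(RAW_CSV)), conn)
print(loaded, conn.execute("SELECT customer, amount FROM orders ORDER BY order_id").fetchall())
# prints: 2 [('Alice', 19.99), ('Bob', 5.0)]
```

In a production pipeline, each function would talk to a real system such as S3, an API, or a warehouse. The overall shape stays the same. The transform step is where bad records are cleaned or rejected before they reach the destination.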

Amazon Web Services (AWS) provides many native services for extracting, transforming, and loading data within the AWS ecosystem. Each tool is designed for different purposes and provides its own set of supported data sources, use cases, and pricing models.

Let’s look at the most popular AWS ETL tools, both native services and third-party platforms, and discover their advantages and limitations.

Top AWS ETL Tools: A Comparative Review (2025)

With a growing mix of native AWS services and third-party platforms, choosing the right ETL tool can be overwhelming. This section breaks down the top options, comparing their strengths, limitations, and ideal use cases.

1. Skyvia

Skyvia is a no-code, cloud-based data integration platform that supports ETL, ELT, and reverse ETL — all through a browser. While Fivetran focuses primarily on ELT and AWS Glue targets enterprise Spark-based workloads, Skyvia stands out with its intuitive visual interface, wide connector coverage (200+), and all-in-one integration toolkit for AWS and SaaS ecosystems. It’s especially suited for teams without deep data engineering resources who still need robust ETL capabilities.

Pros

- No-code visual interface with drag-and-drop pipeline builder

- Supports 200+ connectors, including AWS Redshift, RDS, and S3

- Includes reverse ETL and cloud backup features — unlike Fivetran, which focuses only on ELT

- Free tier available for small-scale needs

Cons

- Doesn’t support real-time streaming; best suited for scheduled syncs

- Advanced features like custom scripting and logging require a Professional plan

- Fewer community tutorials and templates than older, enterprise-focused platforms

Pricing

Starts at $79/month for Standard Data Integration

Professional plans start at $199/month, and a tailor-made offer is available for enterprise needs.

Best For

SMBs and mid-sized teams looking for a visual, all-in-one ETL/ELT platform with deep AWS + SaaS integration — especially when dedicated data engineering resources are limited.

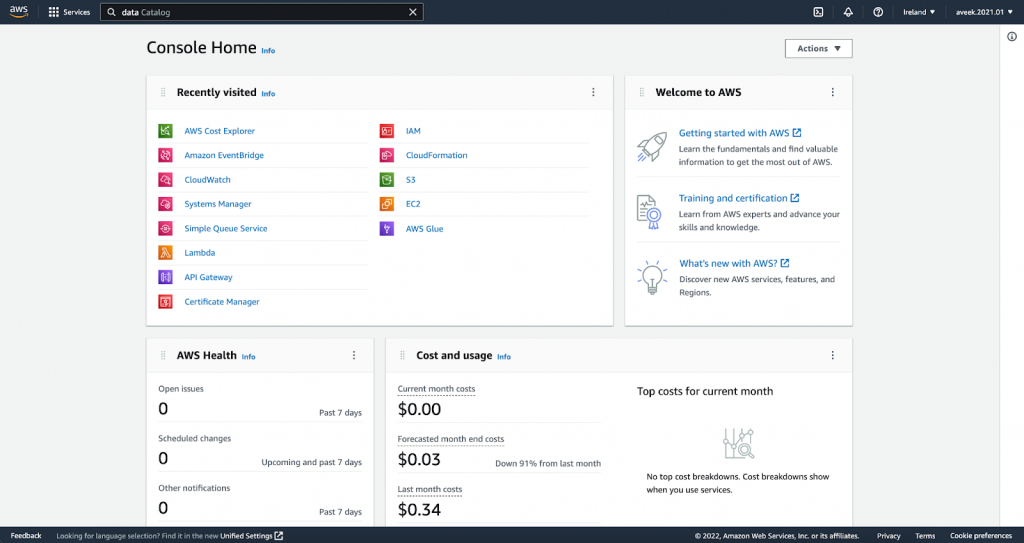

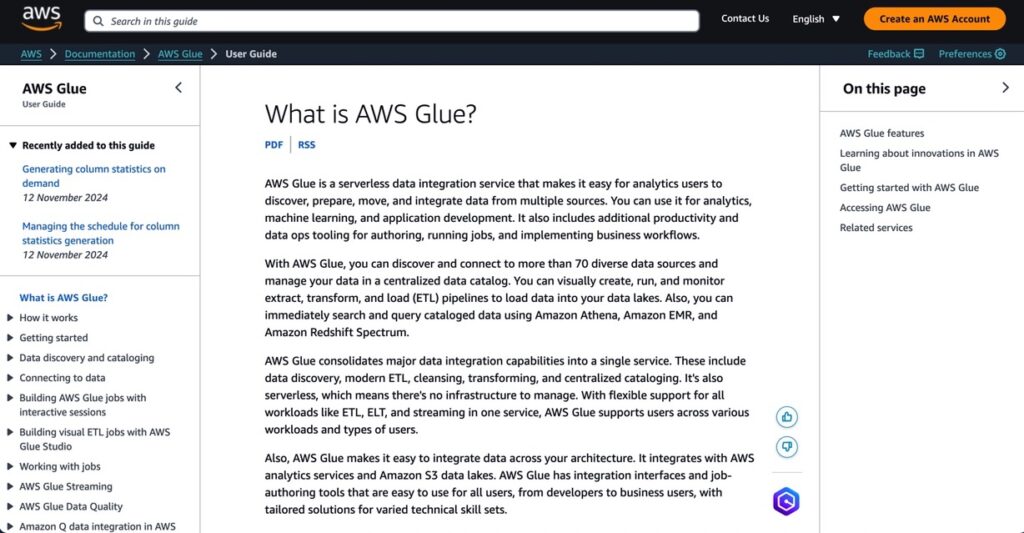

2. AWS Glue

G2 Crowd: 4.2/5

AWS Glue is a serverless, Spark-based ETL service native to the AWS ecosystem. Unlike Skyvia and Fivetran, which offer low-code/no-code interfaces and broader third-party connectivity, Glue is designed for high-performance processing of large datasets within AWS infrastructure. It’s best suited for enterprises already operating at scale and managing complex batch workflows.

Pros

- Connects to 70+ data sources, with deep native integration with AWS services such as S3, Redshift, Athena, and Lake Formation

- Serverless architecture automatically scales with workloads

- Glue 5.0, built on Apache Spark 3.5, runs jobs about 32% faster than Glue 4.0, according to AWS

- Built-in metadata management through Glue Data Catalog

Cons

- Steep learning curve and AWS-specific complexity

- Costs can grow quickly — $0.44 per DPU-hour can add up with large workloads

- No built-in support for reverse ETL or external SaaS destinations

Pricing

Pay-as-you-go model starting at $0.44 per DPU-hour

Glue 5.0 introduces optimizations that can reduce costs by up to 22% compared to earlier versions
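Glue bills by the DPU-hour, so a quick estimate helps before you commit to a job size. The sketch below uses the $0.44 per DPU-hour rate quoted above. Two things are assumptions:

- The per-second billing with a 1-minute minimum is based on AWS's pricing for recent Glue versions. Check it against the current pricing page for your region.
- The job size and schedule are hypothetical.

```python
def glue_job_cost(dpus: float, runtime_minutes: float,
                  usd_per_dpu_hour: float = 0.44,
                  min_billed_minutes: float = 1.0) -> float:
    """Estimate the compute cost of one Glue job run.

    ASSUMPTION: per-second billing with a 1-minute minimum; verify for your
    Glue version and region before relying on the numbers.
    """
    billed_minutes = max(runtime_minutes, min_billed_minutes)
    dpu_hours = dpus * billed_minutes / 60
    return dpu_hours * usd_per_dpu_hour


# Hypothetical job: 10 DPUs for 30 minutes, run once a day for 30 days.
per_run = glue_job_cost(dpus=10, runtime_minutes=30)
print(f"per run: ${per_run:.2f}, per month: ${per_run * 30:.2f}")
# per run: $2.20, per month: $66.00
```

Since cost is DPUs times runtime, doubling the DPUs keeps the bill flat only if it halves the runtime. Any smaller speedup makes the same work more expensive.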

Best For

Large-scale enterprises already embedded in AWS needing powerful, serverless ETL with Spark — ideal for batch processing at petabyte scale and tight AWS-native integration.

3. AWS Data Pipeline

G2 Crowd: 4.1/5

AWS Data Pipeline is one of the oldest AWS-native orchestration tools, built for scheduled data movement and transformation. Compared to newer options like AWS Glue or third-party tools like Skyvia, Data Pipeline offers more granular control over scheduling and retries, but lacks modern interfaces, real-time streaming, and SaaS integrations. It’s best for legacy batch workflows with a consistent cadence.

Pros

- Built-in support for AWS-native services: S3, RDS, DynamoDB, Redshift

- Offers advanced scheduling, retry logic, and dependency-based orchestration

- Supports running custom scripts across AWS or on-prem infrastructure

- Cost-effective for low-frequency, batch-style ETL workflows

Cons

- Outdated UI and limited documentation compared to newer AWS tools like Glue

- Doesn’t support modern streaming use cases or real-time data sync

- Monitoring and logging require manual configuration

- In maintenance mode and no longer available to new AWS customers, so it is a poor choice for long-term planning

Pricing

$1.00/month for low-frequency pipelines; $3.00/month for high-frequency ones

Additional AWS resource usage (EC2, S3, etc.) billed separately

Best For

Teams already committed to AWS, looking for reliable, low-cost batch ETL orchestration that doesn’t require real-time delivery or frequent scaling.

4. Fivetran

G2 Crowd: 4.2/5

Fivetran is a fully managed ELT-first platform that emphasizes speed, simplicity, and automation. Unlike AWS Glue, which requires Spark knowledge, or Skyvia, which allows transformation before load, Fivetran pushes raw data into warehouses like Redshift first — leaving downstream tools to transform. It shines for its maintenance-free connectors and seamless schema evolution, but lacks the transformation flexibility of visual ETL tools.

Pros

- Fully managed SaaS platform with zero-maintenance pipeline setup

- Extensive library of prebuilt connectors for SaaS tools, databases, and data warehouses

- Supports ELT with automatic schema mapping and transformation staging

- Robust incremental syncing and automated error resolution

Cons

- Pricing is based on Monthly Active Rows (MAR), the distinct rows inserted, updated, or deleted each month, so costs can scale quickly when large tables change frequently

- Less flexibility for custom transformations compared to visual ETL tools like Skyvia

- Some connectors may lag behind in supporting newer APIs or custom fields

- Reverse ETL and data export workflows are limited

Pricing

Starts at ~$120/month for low-volume usage

Enterprise plans vary based on usage and support needs and can reach several thousand dollars per month at scale

Best For

Organizations with a mature data stack prioritizing automation, reliability, and low-lift integration — especially for syncing cloud apps into Redshift or Snowflake.
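Fivetran's pricing hinges on Monthly Active Rows. As Fivetran defines them, these are the distinct rows, identified by primary key, that are inserted, updated, or deleted in a month. The sketch below is a simplified model of that counting rule for estimating usage. It is not Fivetran's billing logic, and the change log is hypothetical.

```python
from collections import defaultdict

# Hypothetical change log: (month, table, primary_key, operation)
CHANGES = [
    ("2025-01", "orders", 1, "insert"),
    ("2025-01", "orders", 1, "update"),
    ("2025-01", "orders", 1, "update"),
    ("2025-01", "orders", 2, "insert"),
    ("2025-01", "orders", 2, "delete"),
    ("2025-02", "orders", 1, "update"),
]


def monthly_active_rows(changes):
    """Count distinct (table, primary key) pairs touched in each month."""
    active = defaultdict(set)
    for month, table, pk, _operation in changes:
        active[month].add((table, pk))
    return {month: len(keys) for month, keys in active.items()}


print(monthly_active_rows(CHANGES))  # {'2025-01': 2, '2025-02': 1}
```

Three changes to order 1 in January count as one active row. The bill therefore tracks how many distinct rows change, not how often each one changes.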

5. Stitch

G2 Crowd: 4.4/5

Stitch is a lightweight, cloud-native ELT tool built for simplicity and speed. Compared to more comprehensive platforms like Talend or Skyvia, Stitch focuses on data ingestion — especially useful for small to mid-sized teams wanting fast, no-fuss replication into AWS services like Redshift and S3. While it lacks deep transformation or data governance features, it stands out with its transparent pricing, speed to deploy, and strong compliance credentials.

Pros

- Easy-to-use, no-code setup with over 140 supported connectors

- Predictable tiered pricing based on the number of rows replicated per month

- SOC 2 Type II, HIPAA, GDPR, and ISO 27001 compliant — suited for regulated industries

- Based on the open-source Singer standard, allowing extensibility with custom connectors

- 14-day full-featured free trial and detailed documentation

Cons

- Minimal built-in transformation; better suited for ELT pipelines — complex logic needs external tools

- No support for row-level filtering or advanced schema validation

- Some connectors may experience sync lags with large data volumes or changing APIs

- No free tier beyond trial; entry pricing may deter smaller teams

Pricing

- Standard Plan: $100/month, or $83.33/month billed annually (up to 5M rows)

- Advanced: $1,250/month

- Premium: $2,500/month (annual billing)

- Enterprise: Custom pricing

- Free Trial: 14 days (no credit card)

Best For

Data teams prioritizing simplicity, compliance, and broad SaaS/app connector coverage over complex transformation needs. Ideal for mid-sized companies replicating data to AWS Redshift or S3 on a regular cadence.

6. Hevo Data

G2 Crowd: 4.3/5

Hevo Data is a fully managed, no-code ETL/ELT tool focused on real-time data replication. Unlike Stitch or Skyvia, which primarily support scheduled loads, Hevo’s change data capture (CDC) support makes it ideal for low-latency AWS pipelines. It combines flexibility and real-time sync with solid monitoring, schema handling, and automated transformations — though complex logic might still require scripting.

Pros

- Real-time sync with automated schema mapping and CDC

- Supports 150+ data sources including AWS, GCP, SaaS apps, and databases

- Built-in transformation layer with basic enrichment capabilities

- Robust monitoring, error handling, and alerting dashboard

- No-code interface, yet allows advanced data flow configuration

Cons

- UI can get cluttered in advanced multi-source/multi-destination pipelines

- Advanced transformations may require external logic or scripting

- Pricing can scale rapidly with many connectors or large event volumes

Pricing

- Free Tier: 1M events/month

- Starter Plan: ~$239/month (20M events/month)

- Business & Enterprise: Custom quotes based on volume, SLA, and support

- Offers scaling based on events, not rows — good for sync-heavy use cases

Best For

Teams needing real-time or near-real-time data pipelines into AWS services like Redshift and S3, or into Snowflake, especially for analytics dashboards and live ops monitoring. Strong fit for data-driven organizations without in-house ETL engineering.

7. Talend Data Fabric

G2 Crowd: 4.4/5

Talend Data Fabric is a comprehensive data integration and governance suite, designed for enterprises with complex, regulated, or hybrid cloud environments. Unlike Skyvia or Stitch, Talend provides built-in data quality, MDM, and lineage tools — making it suitable for teams needing end-to-end data oversight. While powerful, it comes with a steep learning curve and enterprise-grade pricing.

Pros

- Complete data management suite: ETL + Data Quality + Governance + MDM

- Supports multi-cloud and hybrid deployments across AWS, Azure, GCP, and on-prem

- Strong data transformation capabilities, including cleansing, masking, and enrichment

- Includes data lineage and audit trails, critical for compliance-heavy industries

- Large user community from its open-source roots (Talend Open Studio), plus enterprise support

Cons

- Higher complexity and longer onboarding time compared to no-code tools like Hevo or Skyvia

- Total cost of ownership is high — includes licensing, infrastructure, and support costs

- Some features gated behind premium tiers or available only in enterprise edition

Pricing

- No public pricing; contact sales for custom quotes

- Talend Open Studio, the former free open-source edition, was retired in January 2024 following Qlik's acquisition of Talend

Best For

Enterprises needing deep transformation, strict data governance, and hybrid cloud control — especially in regulated industries (finance, healthcare, manufacturing) with compliance and audit requirements.

8. Informatica

G2 Crowd: 4.4/5

Informatica is an enterprise-grade platform with decades of data integration expertise. Unlike open-source options like Airbyte or lightweight solutions like Stitch, Informatica excels in complex, governed ETL/ELT pipelines for large-scale enterprises. Its suite includes AI-powered automation, granular security controls, and deep metadata management — ideal for hybrid and multi-cloud environments requiring bulletproof compliance and end-to-end governance.

Pros

- Proven enterprise leader with robust ETL and data governance capabilities

- Extensive connector ecosystem covering AWS, SaaS, legacy, and on-prem systems

- Advanced features for data quality, lineage, masking, and cataloging

- Highly scalable and secure, built for enterprise and regulated industries

Cons

- Steep learning curve and complex architecture not suited for smaller teams

- High total cost of ownership; requires dedicated IT/data ops resources

- Some modules/features are gated behind premium tiers

Pricing

- Contact sales for quote

- Cloud Integration entry-level plans start around $2,000/month

- Free trials available for select products

Best For

Large enterprises that require robust ETL + governance + security, particularly across hybrid or multi-cloud deployments. Ideal for regulated sectors like healthcare, finance, or government.

9. Integrate.io

G2 Crowd: 4.3/5

Integrate.io is a no-code/low-code ETL platform that emphasizes ease of use for cloud data movement. While Talend offers more enterprise-grade governance, Integrate.io shines with its drag-and-drop simplicity and support for real-time streaming, which makes it appealing to data teams without heavy engineering overhead. It’s a flexible middle ground between lightweight tools like Stitch and heavyweight platforms like Informatica.

Pros

- Intuitive visual interface with no-code pipeline builder

- Supports 140+ data sources, including AWS, cloud apps, and SQL/NoSQL databases

- Handles both batch ETL and real-time streaming workflows

- Transparent pricing model with usage-based scaling

- Built-in transformation and enrichment capabilities

Cons

- Advanced custom workflows may require scripting or API workarounds

- UI can feel constrained for very complex, branching data logic

- Pricing can escalate with high-volume or high-frequency jobs

Pricing

- Starts at $1,999/month

- Free trial available

- Usage-based scale-up available for high-volume needs

Best For

SMBs and mid-market orgs seeking a visual, low-maintenance ETL tool to move data into AWS services (Redshift, S3, etc.) — without building pipelines from scratch.

10. Airbyte

G2 Crowd: 4.5/5

Airbyte is an open-source ELT platform known for its flexibility and rapidly expanding connector library. Unlike Skyvia or Hevo, which are fully managed SaaS platforms, Airbyte provides self-hosted and cloud options — giving teams full control over connectors, transformations, and deployment. It’s a go-to solution for organizations with dev resources looking to avoid vendor lock-in and build customized integrations at scale.

Pros

- Open-source and self-hosted by default — free to use and fully customizable

- 350+ connectors and counting, with a strong developer community

- Supports CDC, incremental sync, and ELT into AWS targets

- Cloud-managed option available for teams without DevOps overhead

Cons

- Self-hosted version requires infrastructure management and monitoring

- Cloud version is newer and still maturing in terms of enterprise features

- Some connectors may lack full parity with commercial vendors

Pricing

- Open Source: Free (self-hosted)

- Cloud: Starts at $2.50/credit; includes free tier for low-volume usage

- Enterprise: Custom pricing available

Best For

Teams seeking open-source extensibility, or companies with unique connector needs not met by proprietary tools. Ideal for orgs that want to own their ETL pipeline architecture and scale affordably with internal DevOps support.
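Airbyte's feature list above includes incremental sync. This usually means cursor-based extraction: remember the highest value of a column such as `updated_at` from the last run, then fetch only newer rows. Here is a minimal sketch of that high-watermark pattern using SQLite. The `customers` table and its columns are hypothetical, and this is not how any particular tool implements it internally.

```python
import sqlite3


def incremental_extract(conn, table, cursor_column, last_value):
    """Fetch rows changed since the saved high-watermark and return the new watermark.

    `table` and `cursor_column` are interpolated into SQL, so they must come from
    trusted configuration, never from user input.
    """
    rows = conn.execute(
        f"SELECT id, {cursor_column} FROM {table} "
        f"WHERE {cursor_column} > ? ORDER BY {cursor_column}",
        (last_value,),
    ).fetchall()
    new_watermark = rows[-1][1] if rows else last_value
    return rows, new_watermark


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, updated_at TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)",
                 [(1, "2025-01-01T10:00"), (2, "2025-01-02T09:00")])

# First sync: an empty watermark means every row is extracted.
first, watermark = incremental_extract(conn, "customers", "updated_at", "")
# A source update moves one row past the saved watermark.
conn.execute("UPDATE customers SET updated_at = '2025-01-03T08:00' WHERE id = 1")
second, watermark = incremental_extract(conn, "customers", "updated_at", watermark)
print(len(first), second, watermark)  # 2 [(1, '2025-01-03T08:00')] 2025-01-03T08:00
```

One limitation is worth knowing: a cursor column cannot reveal hard deletes, because deleted rows simply disappear from the query. That gap is one reason tools also offer log-based CDC.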

How to Choose the Best AWS ETL Tool

Selecting the right AWS ETL tool is more than a technical decision — it’s a strategic choice that can shape your data infrastructure’s scalability, agility, and total cost of ownership. In 2025, with more tools and hybrid architectures than ever, it’s crucial to align your choice with your data volumes, team capabilities, and long-term goals.

Here are the key factors to consider when evaluating your ETL options:

1. Assess Your Data Volume and Load Frequency

Start with the basics: how much data do you process, and how often?

- If you’re working with real-time data streams (e.g., IoT sensors, in-app user events), prioritize ETL tools with streaming or near real-time ingestion support like Hevo or Airbyte.

- For scheduled batch jobs, such as daily sales reports or weekly data syncs, tools like AWS Glue or Skyvia may offer a better price-performance balance.

The right tool should scale seamlessly as your data grows — without hitting performance bottlenecks.

2. Match Tools to Transformation Complexity

Not all ETL pipelines are created equal.

- If your workflows involve simple data cleaning, type conversions, or formatting, lightweight tools with visual designers (e.g., Skyvia or AWS Glue DataBrew) will suffice.

- For complex joins, aggregations, or advanced logic, choose platforms like Informatica or Talend, which offer powerful transformation engines and support for custom scripting.

Some tools are ELT-first (like Fivetran), while others offer true in-pipeline ETL, which may better suit data governance and compliance workflows.

3. Map Your Data Sources and Destinations

Where your data lives — and where it’s going — matters.

- If you’re fully embedded in AWS (Redshift, S3, RDS), native services like Glue or Data Pipeline offer tight integration and minimal friction.

- For hybrid or multi-cloud environments, look for tools with a broad range of connectors (e.g., Hevo, Airbyte, Skyvia) and support for cross-platform transfers.

Choose based on current architecture and future platform flexibility.

4. Prioritize Ease of Use vs. Customization

Evaluate your team’s skill set and bandwidth.

- No-code or low-code tools like Integrate.io, Skyvia, or Hevo are great for teams with limited data engineering resources.

- Developer-focused platforms like Airbyte or AWS Glue offer deeper control but require more technical overhead.

The right balance depends on whether your team wants rapid delivery or fine-tuned customization.

5. Understand the True Cost of Ownership

ETL pricing models vary widely — by row, by event, by compute usage.

- Fivetran prices by Monthly Active Rows, Stitch by rows replicated, and Hevo by events, so costs can escalate rapidly with high-volume updates.

- Others like AWS Glue charge per DPU-hour, while Skyvia uses predictable flat-tier pricing.

Always consider not just base cost, but also scaling behavior, support costs, and overage risks.
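To see why scaling behavior matters, compare a usage-based (per-row) model with a flat-tier model at a few volumes. All rates and tier limits below are hypothetical placeholders, not any vendor's actual prices.

```python
def per_row_cost(rows_per_month: int, usd_per_million_rows: float) -> float:
    """Usage-based model: cost grows linearly with rows (or events) processed."""
    return rows_per_month / 1_000_000 * usd_per_million_rows


def flat_tier_cost(rows_per_month: int, tiers: list[tuple[int, float]]) -> float:
    """Tiered model: pay the price of the smallest tier whose row limit fits.

    `tiers` is a list of (row_limit, monthly_price) pairs sorted by row_limit.
    """
    for row_limit, monthly_price in tiers:
        if rows_per_month <= row_limit:
            return monthly_price
    raise ValueError("volume exceeds the largest tier; custom pricing needed")


# Hypothetical prices, NOT any vendor's actual rates.
RATE_PER_MILLION = 10.0
TIERS = [(5_000_000, 99.0), (50_000_000, 399.0)]

for rows in (2_000_000, 20_000_000, 45_000_000):
    print(f"{rows:>11,} rows: per-row ${per_row_cost(rows, RATE_PER_MILLION):8.2f}"
          f" | tiered ${flat_tier_cost(rows, TIERS):8.2f}")
```

With these made-up numbers, per-row pricing is cheaper at 2M and 20M rows, while the tiered plan wins at 45M. A workload that outgrows the top tier raises an error here, which mirrors the jump to custom pricing.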

6. Check Monitoring, Alerting, and Error Handling

When something breaks — and it will — visibility is key.

- Look for tools with built-in dashboards, logging, and retry mechanisms.

- Platforms like Hevo and Skyvia offer intuitive error tracking and alerting, while Airbyte’s open-source model gives full control over log pipelines.

This is essential for production-grade pipelines and SLA compliance.
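Whatever tool you pick, its retry behavior usually follows a common pattern. Transient errors are retried with exponential backoff and jitter, and a clear error surfaces once attempts run out. Below is a minimal Python sketch of that pattern. Which exceptions count as transient (`ConnectionError` and `TimeoutError` here) is an assumption you would tune for your own sources.

```python
import random
import time


def run_with_retries(task, max_attempts=4, base_delay=1.0, max_delay=30.0,
                     retry_on=(ConnectionError, TimeoutError),
                     sleep=time.sleep, log=print):
    """Run `task`, retrying transient failures with exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except retry_on as exc:
            if attempt == max_attempts:
                log(f"giving up after {attempt} attempts: {exc!r}")
                raise
            # The backoff ceiling doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            delay = random.uniform(0, ceiling)  # full jitter avoids synchronized retries
            log(f"attempt {attempt} failed ({exc!r}); retrying in {delay:.1f}s")
            sleep(delay)


# Usage: a hypothetical load step that fails twice before succeeding.
calls = {"n": 0}


def flaky_load():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("warehouse unavailable")
    return "loaded"


result = run_with_retries(flaky_load, sleep=lambda seconds: None)  # skip real waiting in the demo
print(result, calls["n"])  # loaded 3
```

Injecting `sleep` and `log` keeps the function testable. It also lets you route the messages to your own logging or alerting system.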

7. Decide If You Need Real-Time Processing

If your use case involves live dashboards, customer behavior modeling, or fraud detection, batch ETL won’t cut it.

- Choose tools with real-time CDC (Change Data Capture) capabilities, such as Hevo, Airbyte, or Fivetran (for certain sources).

For less time-sensitive workloads, scheduled batch ETL tools may be more cost-efficient.
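At its core, CDC replays an ordered stream of insert, update, and delete events onto a target. The sketch below shows that replay logic in Python. The event format (`seq`, `op`, `key`, `after`) is hypothetical. Real CDC tools each emit their own schema and handle ordering and schema changes for you.

```python
from typing import Any

Row = dict[str, Any]


def apply_change_events(target: dict[int, Row], events: list[dict[str, Any]]) -> dict[int, Row]:
    """Replay insert/update/delete events onto a keyed copy of the target table."""
    # Apply in source commit order; `seq` stands in for a log sequence number.
    for event in sorted(events, key=lambda e: e["seq"]):
        op, key = event["op"], event["key"]
        if op in ("insert", "update"):
            # Merge changed columns so partial updates keep untouched columns.
            target[key] = {**target.get(key, {}), **event["after"]}
        elif op == "delete":
            target.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op}")
    return target


events = [
    {"seq": 3, "op": "update", "key": 1, "after": {"status": "shipped"}},
    {"seq": 1, "op": "insert", "key": 1, "after": {"status": "new", "total": 40}},
    {"seq": 2, "op": "insert", "key": 2, "after": {"status": "new", "total": 15}},
    {"seq": 4, "op": "delete", "key": 2},
]
print(apply_change_events({}, events))  # {1: {'status': 'shipped', 'total': 40}}
```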

8. Evaluate Infrastructure Control Needs

Do you want to control the compute layer or let the tool handle it?

- Serverless options like AWS Glue minimize infrastructure management.

- If you prefer custom compute tuning or need to optimize cost/performance trade-offs, consider tools like Talend or self-hosted Airbyte.

This choice often depends on your organization’s DevOps maturity and compliance requirements.

9. Verify Integration with Storage and BI Tools

Your ETL tool should fit cleanly into your broader data stack.

- Ensure compatibility with AWS-native storage (S3, Redshift, RDS) and analytics platforms (Athena, QuickSight, etc.).

- Some tools, like Skyvia and Hevo, also offer reverse ETL, enabling you to sync transformed data back into operational systems.

Native AWS ETL Tools Included in the ETL Process

For organizations working primarily within the AWS ecosystem, native ETL tools offer tight service integration, scalability, and cost-efficiency — especially when building pipelines around Redshift, S3, or RDS. Here are five core AWS-native tools commonly used in ETL workflows, along with their ideal usage scenarios:

1. AWS Glue

Best for: Serverless, large-scale ETL, and data lake preparation

AWS Glue is AWS’s flagship ETL tool, purpose-built for transforming, cleaning, and moving data across the AWS stack. It automatically catalogs source data using the Glue Data Catalog and leverages Apache Spark under the hood for distributed processing.

Glue is serverless, so it handles job orchestration, scaling, and infrastructure, reducing DevOps overhead. It’s especially useful for preparing semi-structured data (JSON, Parquet, etc.) from S3 into analytics-ready formats for Redshift, Athena, or SageMaker.

When to use it:

- Batch ETL at scale

- Building data lakes or pipelines for ML/BI

- Transforming large semi-structured datasets
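A typical Glue task is reshaping semi-structured JSON into flat, analytics-ready columns. Glue provides its own Spark-based transforms for this, such as Relationalize. The plain-Python sketch below only illustrates the idea on a single hypothetical record; it is not Glue code.

```python
import json
from typing import Any


def flatten(record: dict[str, Any], parent_key: str = "", sep: str = ".") -> dict[str, Any]:
    """Turn nested objects into dotted column names; lists are left as-is."""
    flat: dict[str, Any] = {}
    for key, value in record.items():
        column = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, column, sep))
        else:
            flat[column] = value
    return flat


raw = '{"id": 7, "user": {"name": "Ana", "geo": {"country": "PT"}}, "tags": ["a", "b"]}'
print(flatten(json.loads(raw)))
# {'id': 7, 'user.name': 'Ana', 'user.geo.country': 'PT', 'tags': ['a', 'b']}
```

Arrays such as `tags` need a separate decision. You can keep them as a single column, explode them into rows, or split them into a child table.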

2. AWS Glue DataBrew

Best for: No-code data prep for analysts and citizen data users

AWS Glue DataBrew brings data transformation to non-technical users through a visual, no-code interface. With over 250 built-in operations (deduplication, normalization, joins, etc.), users can prep data without writing a line of code.

It integrates seamlessly with the Glue Data Catalog, S3, Redshift, and SageMaker — making it a great option for cleaning datasets before feeding them into ML workflows or BI dashboards.

When to use it:

- Analyst-friendly data prep with no-code UX

- Cleaning and standardizing structured or semi-structured data

- Preparing input datasets for machine learning pipelines
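DataBrew itself needs no code, but it helps to know what a recipe does to your data. This Python sketch mimics a simple two-step recipe, normalize and then deduplicate, on hypothetical contact rows. It is an illustration, not DataBrew's API.

```python
def clean_contacts(rows: list[dict]) -> list[dict]:
    """Normalize emails and names, then keep the first row per email."""
    seen: set[str] = set()
    result = []
    for row in rows:
        email = row["email"].strip().lower()  # normalize before comparing
        if email in seen:
            continue  # duplicate once normalized
        seen.add(email)
        name = " ".join(row["name"].split()).title()  # collapse extra spaces, fix casing
        result.append({"email": email, "name": name})
    return result


rows = [
    {"email": "Ana@Example.com ", "name": "ana  silva"},
    {"email": "ana@example.com", "name": "Ana Silva"},
    {"email": "bo@example.com", "name": "BO LI"},
]
print(clean_contacts(rows))
# [{'email': 'ana@example.com', 'name': 'Ana Silva'}, {'email': 'bo@example.com', 'name': 'Bo Li'}]
```

Step order matters here. Deduplicating before normalizing would treat `Ana@Example.com ` and `ana@example.com` as different people and keep both.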

3. AWS Data Pipeline

Best for: Scheduled batch workflows with AWS-native orchestration

AWS Data Pipeline is a workflow automation tool for orchestrating ETL jobs across AWS and on-prem systems. You can schedule tasks, define dependencies, and add retry logic — similar to tools like Apache Airflow, but tightly coupled with AWS.

While more manual and less modern than Glue, it remains effective for regular batch processes like nightly backups, table syncs, or job chaining across EC2, EMR, RDS, and S3.

When to use it:

- Time-based ETL workflows

- Moving data between AWS services or from on-prem systems

- Lightweight orchestration of SQL scripts or EMR jobs
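Data Pipeline is configured through the console or JSON pipeline definitions rather than Python. The orchestration ideas it provides, dependency ordering plus retries, can still be sketched with Python's standard `graphlib` module. The task names and the simulated failure are hypothetical.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies, max_retries=1):
    """Run tasks in dependency order, retrying each failed task up to max_retries times.

    tasks: name -> zero-argument callable
    dependencies: name -> set of upstream task names
    """
    completed = []
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break  # success: move on to the next task
            except Exception as exc:
                if attempt == max_retries:
                    raise RuntimeError(f"task {name!r} failed after {attempt + 1} attempts") from exc
        completed.append(name)
    return completed


attempts = {"transform": 0}


def flaky_transform():
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise RuntimeError("transient failure")  # simulated; succeeds on retry


tasks = {"copy_from_rds": lambda: None, "transform": flaky_transform, "load_redshift": lambda: None}
deps = {"copy_from_rds": set(), "transform": {"copy_from_rds"}, "load_redshift": {"transform"}}
order = run_pipeline(tasks, deps)
print(order)  # ['copy_from_rds', 'transform', 'load_redshift']
```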

4. Amazon EMR (Elastic MapReduce)

Best for: Custom, code-heavy big data ETL at scale

Amazon EMR is a managed cluster platform for running open-source big data frameworks like Apache Spark, Hadoop, Hive, and Presto. It offers full control over cluster configuration and supports highly customizable ETL workflows.

Unlike Glue’s abstracted serverless model, EMR is ideal when you need to fine-tune performance, install custom libraries, or migrate existing Spark/Hadoop jobs to AWS.

When to use it:

- Custom big data pipelines with complex transformations

- Migrating legacy Spark/Hadoop ETL jobs to the cloud

- Scenarios requiring full infrastructure control and optimization

5. Amazon Data Firehose (formerly Kinesis Data Firehose)

Best for: Real-time ingestion and delivery to AWS targets

Amazon Data Firehose is AWS’s real-time streaming ingestion tool, used to capture and load high-velocity data into S3, Redshift, or OpenSearch. It handles buffering, batching, and retry logic automatically and supports lightweight data transformations using AWS Lambda.

While not a full ETL tool, Firehose is a key component in streaming data architectures — particularly for log collection, clickstream analysis, or IoT event delivery.

When to use it:

- Real-time ingestion of streaming data

- Delivering event data to S3, Redshift, or analytics platforms

- Lightweight transformations at ingestion
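Firehose's Lambda transformations follow a fixed contract. The function receives a batch of records, each with a `recordId` and base64-encoded `data`. It must return every `recordId` with base64-encoded output data and a `result` of `Ok`, `Dropped`, or `ProcessingFailed`. The sketch below follows that contract. The payload fields (`level`, `timestamp`, `message`) are hypothetical log attributes.

```python
import base64
import json


def lambda_handler(event, context):
    """Firehose transformation Lambda: every input recordId must be returned with a result."""
    output = []
    for record in event["records"]:
        try:
            payload = json.loads(base64.b64decode(record["data"]))
            if payload.get("level") == "DEBUG":
                # Drop noisy records instead of delivering them.
                output.append({"recordId": record["recordId"], "result": "Dropped", "data": record["data"]})
                continue
            slim = {"ts": payload["timestamp"], "msg": payload["message"].strip()}
            # Newline-delimit records so files written to S3 stay line-oriented JSON.
            data = base64.b64encode((json.dumps(slim) + "\n").encode("utf-8")).decode("ascii")
            output.append({"recordId": record["recordId"], "result": "Ok", "data": data})
        except (ValueError, KeyError, AttributeError):
            # Malformed input: mark it failed rather than delivering it.
            output.append({"recordId": record["recordId"], "result": "ProcessingFailed", "data": record["data"]})
    return {"records": output}
```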

Conclusion

There’s no one-size-fits-all solution when it comes to AWS ETL tools. The “best” tool isn’t the one with the most features — it’s the one that fits your data architecture, team skills, use cases, and growth plans.

Native AWS services like Glue, Data Pipeline, and Firehose offer deep integration and serverless scaling for teams already invested in the AWS ecosystem. Meanwhile, third-party platforms like Skyvia, Fivetran, Hevo, and Airbyte provide broader connectivity, intuitive interfaces, and specialized capabilities that can dramatically reduce development time and overhead.

The key is to evaluate tools against the factors we covered — data volume, transformation complexity, ease of use, cost, monitoring, infrastructure control, and integration needs. By doing so, you’ll be better positioned to choose an ETL platform that delivers both immediate results and long-term scalability.

Whether you’re building real-time pipelines, migrating legacy systems, or designing modern data lakes — choosing the right ETL solution is foundational to your AWS data strategy.

FAQ for AWS ETL Tools

What are the limitations of using AWS Glue for ETL tasks?

While AWS Glue is powerful, it has some limitations:

1. Connector Limitations. It primarily supports AWS-hosted data sources and has fewer connectors for non-AWS data sources.

2. Coding Requirements. Advanced transformations may require coding in Python or Scala.

3. Learning Curve. Though Glue Studio simplifies things, fully leveraging the tool’s capabilities can take time.

How is AWS Lambda used for ETL, and what are its benefits and drawbacks?

AWS Lambda is used for ETL tasks that require event-driven processing, such as transforming data in response to file uploads in Amazon S3 or updates in DynamoDB. It’s a serverless tool that automatically scales with the number of requests.

Benefits

It’s serverless, and there is no need to manage infrastructure.

Suitable for real-time data transformations.

Drawbacks

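Limited capabilities for full-scale ETL: each invocation can run for at most 15 minutes with up to 10 GB of memory, so large batch jobs are better suited to Glue or EMR.

Pipelines must be designed around event triggers (for example, S3 uploads or DynamoDB Streams), which adds design and debugging effort.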
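A common pattern is a Lambda function triggered by S3 uploads. The event structure used below (`Records[].s3.bucket.name` and `Records[].s3.object.key`) is the standard S3 event notification format. The bucket name, key layout, and CSV filter are hypothetical. The read-and-transform step is left as a comment so the sketch runs without AWS credentials.

```python
from urllib.parse import unquote_plus


def lambda_handler(event, context):
    """Collect the CSV objects named in an S3 event notification."""
    to_process = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded (for example, spaces become "+").
        key = unquote_plus(record["s3"]["object"]["key"])
        if not key.endswith(".csv"):
            continue  # ignore files this job does not handle
        # A real function would read the object here (e.g. with boto3), transform it,
        # and write the result to its destination.
        to_process.append({"bucket": bucket, "key": key})
    return {"processed": to_process}


sample_event = {"Records": [
    {"s3": {"bucket": {"name": "example-bucket"}, "object": {"key": "raw/sales+2025.csv"}}},
    {"s3": {"bucket": {"name": "example-bucket"}, "object": {"key": "raw/readme.txt"}}},
]}
print(lambda_handler(sample_event, None))
# {'processed': [{'bucket': 'example-bucket', 'key': 'raw/sales 2025.csv'}]}
```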

How do pricing models differ between native AWS ETL tools?

Each AWS ETL tool has a unique pricing model:

1. AWS Glue. Charges per DPU-hour used by ETL jobs, billed by the second.

2. AWS Data Pipeline. Charges a monthly fee per activity or precondition. The fee depends on how often it runs and whether it runs on AWS or on-premises, and the underlying AWS resources are billed separately.

3. Amazon EMR. Costs vary based on cluster usage, data transfer, and storage.

4. AWS Glue DataBrew. Charges per interactive data-preparation session and per node-hour for jobs.

5. AWS Lambda. Costs are determined by the number of requests and by execution duration weighted by the memory allocated to each function (GB-seconds).
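As a worked example of Lambda's model, cost is the request charge plus GB-seconds, that is, duration times allocated memory. The default rates below are assumed on-demand x86 prices for us-east-1. They ignore the free tier and may be out of date. The workload is hypothetical.

```python
def lambda_monthly_cost(invocations: int, avg_duration_s: float, memory_mb: int,
                        usd_per_million_requests: float = 0.20,
                        usd_per_gb_second: float = 0.0000166667) -> float:
    """Estimate monthly Lambda cost: request charge plus memory-weighted duration.

    The default rates are ASSUMED on-demand x86 prices for us-east-1; they ignore
    the free tier and may change, so verify them before budgeting.
    """
    gb_seconds = invocations * avg_duration_s * (memory_mb / 1024)
    request_cost = invocations / 1_000_000 * usd_per_million_requests
    return request_cost + gb_seconds * usd_per_gb_second


# Hypothetical workload: 1M invocations a month, 0.5 s each, 512 MB of memory.
print(f"${lambda_monthly_cost(1_000_000, 0.5, 512):.2f}")  # $4.37
```

Lambda allocates CPU in proportion to memory. A function with more memory costs more per second but may finish sooner, so memory size affects cost as well as speed.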

How do the third-party ETL solutions compare to Native AWS Tools?

1. Ease of Use. Third-party solutions like Skyvia and Fivetran offer no-code or low-code interfaces, making them easier for non-technical users compared to some native AWS tools like AWS Glue, which may require scripting for complex transformations.

2. Integration Capabilities. Third-party tools often support a broader range of data sources, including non-AWS platforms. For example, Skyvia and Talend connect to various cloud services, databases, and even on-premises systems, while AWS Glue focuses mainly on AWS-hosted data sources.

3. Customization and Flexibility. Informatica and Talend offer extensive customization for data transformations, while native AWS tools may have limitations on advanced data workflows.

4. Cost Considerations. Native AWS tools typically have usage-based pricing, while third-party solutions may have subscription-based models that can be more predictable for budgeting.