Are you struggling to keep your workflows running smoothly with PostgreSQL? The right ETL tools can remove the headache of moving and transforming data between systems, letting businesses focus on their growth.

If your company values customization and control, open-source tools are perfect for hands-on developers. However, premium platforms can streamline the integration and save time if you’re looking for something easy to use and packed with advanced features.

This guide will explore the top ETL platforms for PostgreSQL, highlighting their unique strengths and the types of businesses that can benefit most.

Table of Contents

- 3 Steps of What is ETL

- What is PostgreSQL

- Integrating Postgres with ETL Tools: Key Benefits

- Open Source ETL Tools for PostgreSQL

- Paid PostgreSQL ETL Tools

- Finding Your Ideal Postgres ETL Tool

- Optimizing Workflows with PostgreSQL ETL

- Conclusion

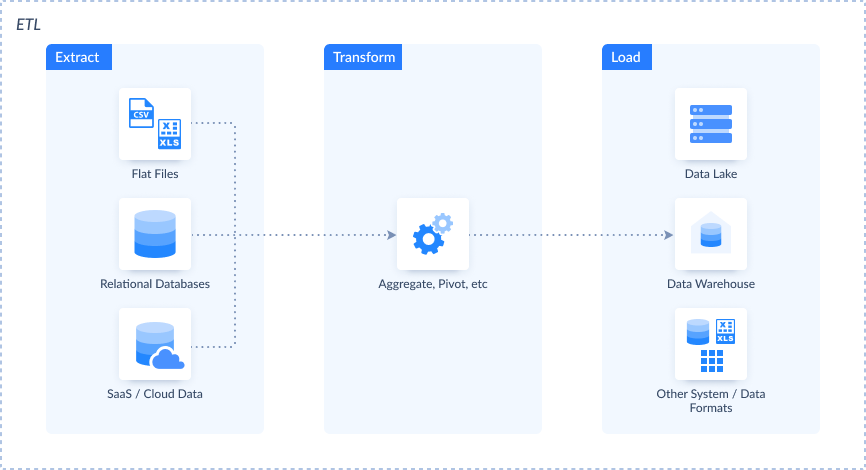

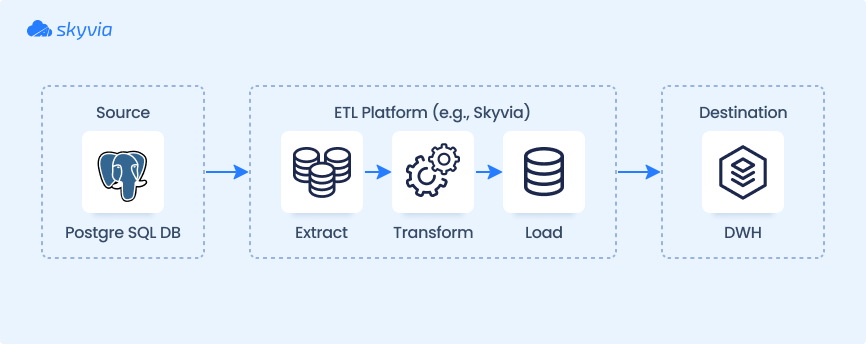

3 Steps of What is ETL

ETL is the backbone of how data moves and gets organized digitally. Think of it as a three-step process:

- Extract data from different sources (like databases, APIs, or spreadsheets).

- Transform it (cleaning, formatting, or reshaping it to fit your needs).

- Load it into a target system, like a database or analytics tool, where it’s ready to be used.

ETL helps businesses turn messy, scattered data into something valuable and actionable. Whether syncing sales numbers, cleaning customer records, or preparing reports, it ensures the correct information is in the right place at the right time.
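
The three steps can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the inline CSV text, the `customers` table, and the function names are made up for the example, and the standard-library `sqlite3` module stands in for PostgreSQL so the sketch runs without a database server.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; a real pipeline would read from a file, API, or database.
RAW_CSV = """name,email,signup_date
 Alice ,ALICE@EXAMPLE.COM,2024-01-05
Bob,bob@example.com,2024-02-11
,missing@example.com,2024-03-01
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim whitespace, lowercase emails, drop rows without a name."""
    cleaned = []
    for row in rows:
        name = row["name"].strip()
        if not name:
            continue  # skip incomplete records
        cleaned.append((name, row["email"].strip().lower(), row["signup_date"]))
    return cleaned


def load(conn, rows):
    """Load: insert the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (name TEXT, email TEXT, signup_date TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # assumption: stand-in for a PostgreSQL connection
load(conn, transform(extract(RAW_CSV)))
loaded = conn.execute("SELECT name, email FROM customers ORDER BY name").fetchall()
print(loaded)
```

Keeping each step in its own function makes it easy to test the transformation logic separately from the source and the target.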

What is PostgreSQL

PostgreSQL is a powerful, open-source relational database management system known for its flexibility and reliability. Whether you’re managing a small app or running massive enterprise systems, it can handle the load.

- Supports complex queries.

- Handles tons of information.

- Lets you create custom data types.

Plus, it has advanced features like JSON support, full-text search, and robust security. Developers love its versatility; businesses trust it to keep their datasets organized and accessible.

Integrating Postgres with ETL Tools: Key Benefits

When paired with ETL tools, PostgreSQL unlocks powerful opportunities to streamline and enhance operational workflows. This combination allows businesses to automate processes, ensuring seamless integration with various platforms and applications. PostgreSQL is a central hub for managing diverse tasks, transforming raw inputs into structured, actionable insights.

Key Benefits

- Automated Workflows. Eliminate manual intervention by streamlining extraction, transformation, and loading processes.

- Consistency Across Systems. Maintain accurate, synchronized information across platforms and applications, avoiding human errors.

- Custom Transformations. Adapt structures and formats to specific business requirements with flexible mapping capabilities.

- Scalability. Efficiently handle different volumes and increasingly complex operations as your business grows.

- Improved Analytics. Deliver clean, well-organized inputs to analytics tools for better insights and decision-making.

- Cost and Time Savings. Minimize manual effort and reduce operational expenses with automated, repeatable workflows.

- Enhanced Security. Ensure secure transfers and compliance-ready configurations for handling sensitive information.

Open Source ETL Tools for PostgreSQL

If you chose PostgreSQL as your platform, it’s likely because of its open-source nature and cost-effectiveness. Starting with no-cost ETL options is a practical way to explore powerful capabilities without a financial commitment. Such tools offer flexibility and customization, making them a great fit for developers and small businesses.

Let’s kick off our list with free ETL solutions.

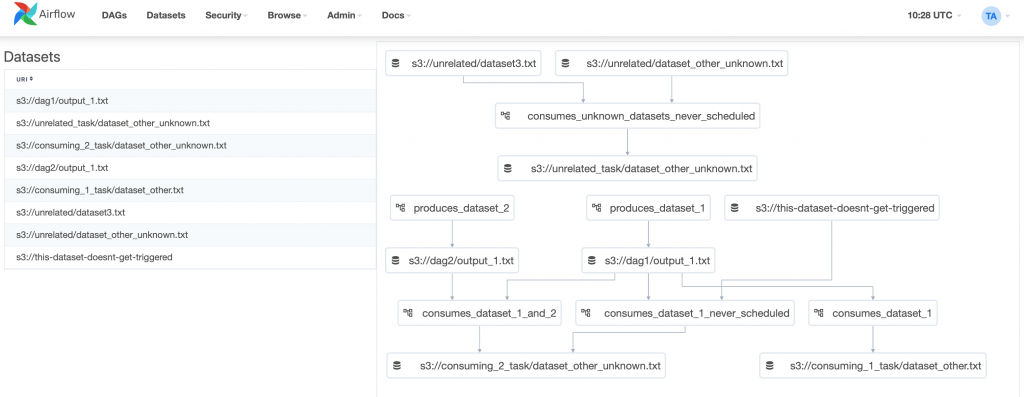

Apache Airflow

Apache Airflow is a platform for creating, scheduling, and monitoring batch processes. Its modular architecture makes it highly scalable, allowing it to handle even the most complex data pipelines. It relies on a message queue, a mechanism that facilitates asynchronous communication by storing messages such as requests, responses, errors, or general information. These messages remain in the queue until they are processed by a designated consumer, ensuring smooth coordination across distributed systems.

With Apache Airflow, you can use Python to define processes and outline data extraction, transformation, and loading steps. Here, the power of ETL truly comes into play, enabling efficient and reliable data workflows.
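
Airflow models a pipeline as a directed acyclic graph (DAG) of tasks. The sketch below is plain Python, not Airflow's API: it uses the standard-library `graphlib` module only to show the underlying idea of running tasks in dependency order. The task names and the `run_log` list are hypothetical.

```python
from graphlib import TopologicalSorter

run_log = []  # records the order in which tasks ran


def extract():
    run_log.append("extract")


def transform():
    run_log.append("transform")


def load():
    run_log.append("load")


def notify():
    run_log.append("notify")


TASKS = {"extract": extract, "transform": transform, "load": load, "notify": notify}

# Map each task to the set of tasks that must finish before it starts.
DEPENDENCIES = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "notify": {"load"},
}

# static_order() yields task names so that every dependency comes first.
for name in TopologicalSorter(DEPENDENCIES).static_order():
    TASKS[name]()

print(run_log)
```

A real orchestrator adds scheduling, retries, and monitoring on top of this ordering, which is where a tool like Airflow earns its place.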

Rating

G2 Crowd 4.3/5 (based on 87 reviews)

Pros

- A very extensible, plug-and-play platform.

- It can process thousands of concurrent workflows, according to Adobe.

- It can also be used for reverse ETL and other data-processing tasks.

- 75+ provider packages to integrate with third-party providers.

- Uses a web user interface to monitor workflows.

Cons

- Requires Python coding skills to create pipelines.

- There’s a steep learning curve if you are transitioning from a GUI tool to this setup.

- The community is still small but growing.

- Installation requires the command line.

Apache Kafka

Apache Kafka is a distributed platform designed for real-time data streaming. It captures information from various sources, such as databases, sensors, mobile devices, and applications, and then directs it to various destinations.

Kafka operates with producers that write data to the system and consumers that read and process it. It also offers an API compatible with multiple programming languages, enabling integration with tools like Python and Go or database libraries such as ODBC and JDBC. This approach makes the platform a versatile option for working with PostgreSQL as both a source and a target for data.
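
To make the producer and consumer roles concrete, here is a toy, in-memory model in plain Python. It is not the Kafka client API: the `Topic` and `Consumer` classes and their methods are invented for illustration. The sketch only mirrors the idea that a topic behaves like an append-only log and that each consumer tracks its own read position (offset).

```python
class Topic:
    """Toy append-only log standing in for a Kafka topic (hypothetical class)."""

    def __init__(self, name):
        self.name = name
        self._log = []

    def produce(self, event):
        """Append an event and return its offset in the log."""
        self._log.append(event)
        return len(self._log) - 1

    def read_from(self, offset):
        """Return all events at or after the given offset."""
        return self._log[offset:]


class Consumer:
    """Reads a topic and remembers how far it has read (hypothetical class)."""

    def __init__(self, topic, offset=0):
        self.topic = topic
        self.offset = offset  # 0 means "read from the beginning"

    def poll(self):
        """Return events not yet seen by this consumer and advance the offset."""
        events = self.topic.read_from(self.offset)
        self.offset += len(events)
        return events


topic = Topic("quickstart-events")
topic.produce("This is my first event")
topic.produce("This is my second event")

consumer = Consumer(topic)
first_batch = consumer.poll()   # both events written so far
topic.produce("This is my third event")
second_batch = consumer.poll()  # only the event added since the last poll
print(first_batch, second_batch)
```

Because the log is never modified in place, a second consumer created with `offset=0` would still see all three events, independently of the first one.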

Additionally, GUI tools are available to simplify management and monitoring. For those who enjoy coding and scripting, it’s an excellent choice for PostgreSQL ETL processes.

Below is an example of a console script for writing data:

$ bin/kafka-console-producer.sh --topic quickstart-events --bootstrap-server localhost:9092

This is my first event

This is my second event

And here’s a sample script for reading events:

$ bin/kafka-console-consumer.sh --topic quickstart-events --from-beginning --bootstrap-server localhost:9092

This is my first event

This is my second event

Rating

G2 Crowd 4.5/5 (based on 121 reviews)

Pros

- 80% of all Fortune 100 companies trust and use Kafka.

- Handles millions of messages per second.

- Very fast and highly scalable, with latencies as low as 2 ms.

- High availability and fault-tolerant.

- Large community for support.

Cons

- Requires technical skills to create producers and consumers.

- Like Apache Airflow, there’s a steep learning curve if you transition from a GUI ETL tool to this tool.

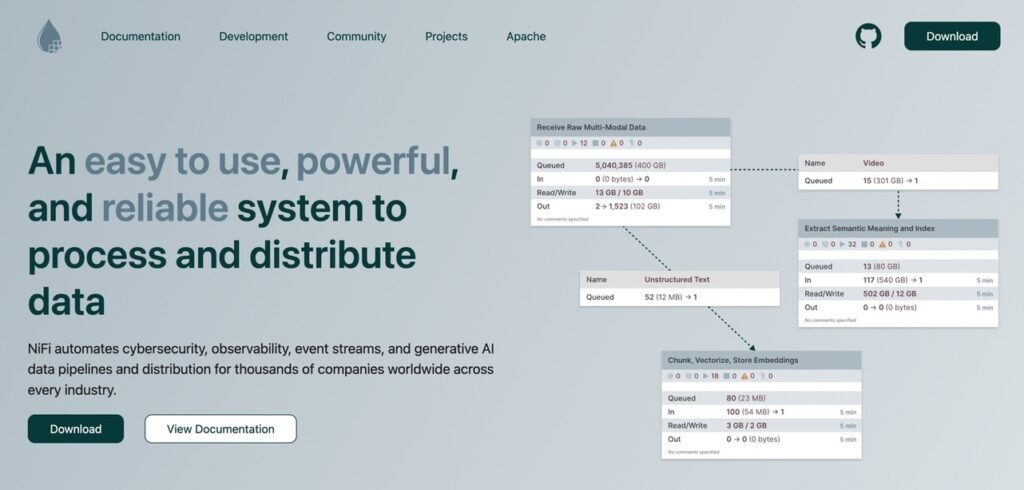

Apache NiFi

If you want a free, open-source ETL tool with a GUI, Apache NiFi is a strong bet. It boasts no-code data routing, transformation, and system mediation logic. The user-friendly platform allows even non-developers to create complex workflows easily.

NiFi supports various data sources and destinations, offering flexibility for different integration needs. Its drag-and-drop interface streamlines the process of building and managing pipelines, saving time and reducing the risk of errors. So, if you hate coding and scripting but still need powerful ETL capabilities, this is the perfect open-source solution.

Rating

G2 Crowd 4.2/5 (based on 24 reviews)

Pros

- Supports a wide variety of sources and targets, including PostgreSQL.

- Complex transformations require no coding.

- Uses a drag-and-drop designer for your pipelines.

- It also has templates for the most commonly used data flows.

- It can execute large jobs with multithreading.

- You can also use data splitting to reduce processing time.

- Supports data masking of sensitive data.

- Supports encrypted communication.

- You can use NiFi chat, Slack, and IRC for support.

Cons

- The user community is small but growing.

HPCC Systems

HPCC Systems uses a lightweight core architecture for high-speed data engineering, making it an excellent choice for efficiently working with large volumes of information. It offers a secure and scalable platform, ensuring integrity and protection while managing complex workflows. With its unique Enterprise Control Language (ECL), users can perform powerful transformations and analytics with minimal coding effort.

The platform also includes integrated components for ingestion, processing, and querying, simplifying the entire pipeline. This blend of performance, simplicity, and security makes it a versatile solution for businesses looking to streamline their information management processes.

Rating

No review details are available.

Pros

- Secure, near real-time data engineering.

- Uses the Enterprise Control Language (ECL) designed for huge data projects.

- Provides a wide range of machine learning algorithms accessible through ECL.

- Provides documentation and training to develop pipelines.

Cons

- Must learn a new language (ECL) to design pipelines.

Singer.io

Singer.io is an open-source framework for building simple, flexible, scalable data pipelines. It follows a straightforward “tap and target” architecture, where taps extract information from sources, and targets load it into destinations.

The platform uses a standard JSON-based format for data exchange, ensuring compatibility across various systems and applications.

One of its standout features is the ability to handle diverse sources, including APIs, databases, and flat files, making it suitable for various ETL needs.

The system also supports incremental extraction, allowing only new or updated records to be processed, which improves efficiency and reduces resource consumption. Developers appreciate its simplicity and extensibility, as they can create custom taps or targets in Python to meet specific requirements.
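
The tap-and-target idea can be sketched in plain Python. The example below does not use the Singer libraries: it hand-writes the JSON message types defined by the Singer specification (`SCHEMA`, `RECORD`, `STATE`), and the `users` stream, the sample rows, and the `updated_at` bookmark are assumptions made for illustration. It also shows incremental extraction: the tap emits only rows newer than the saved bookmark.

```python
import json

# Hypothetical source rows; a real tap would query an API or a database.
SOURCE_ROWS = [
    {"id": 1, "name": "Alice", "updated_at": "2024-01-01"},
    {"id": 2, "name": "Bob", "updated_at": "2024-02-01"},
    {"id": 3, "name": "Carol", "updated_at": "2024-03-01"},
]


def tap(state):
    """Yield Singer-style JSON lines for rows updated after the bookmark."""
    since = state.get("updated_at", "")
    latest = since
    yield json.dumps({
        "type": "SCHEMA",
        "stream": "users",
        "key_properties": ["id"],
        "schema": {"properties": {"id": {"type": "integer"},
                                  "name": {"type": "string"},
                                  "updated_at": {"type": "string"}}},
    })
    for row in SOURCE_ROWS:
        if row["updated_at"] > since:  # ISO dates compare correctly as strings
            yield json.dumps({"type": "RECORD", "stream": "users", "record": row})
            latest = max(latest, row["updated_at"])
    # The STATE message carries the bookmark for the next run.
    yield json.dumps({"type": "STATE", "value": {"updated_at": latest}})


def target(lines):
    """Collect records and return them together with the last state seen."""
    records, state = [], {}
    for line in lines:
        message = json.loads(line)
        if message["type"] == "RECORD":
            records.append(message["record"])
        elif message["type"] == "STATE":
            state = message["value"]
    return records, state


first_run, saved_state = target(tap({}))
second_run, _ = target(tap(saved_state))
print(len(first_run), saved_state, len(second_run))
```

In a real setup the tap and the target are separate programs connected by a pipe, and the target would write to a destination such as PostgreSQL instead of returning a list.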

Here’s a sample Singer ETL script:

› pip install target-csv tap-exchangeratesapi

› tap-exchangeratesapi | target-csv

INFO Replicating the latest exchange rate data from exchangeratesapi.io

INFO Tap exiting normally

› cat exchange_rate.csv

AUD,BGN,BRL,CAD,CHF,CNY,CZK,DKK,GBP,HKD,HRK,HUF,IDR,ILS,INR,JPY,KRW,MXN,MYR,NOK,NZD,PHP,PLN,RON,RUB,SEK,SGD,THB,TRY,ZAR,EUR,USD,date

1.3023,1.8435,3.0889,1.3109,1.0038,6.869,25.47,7.0076,0.79652,7.7614,7.0011,290.88,13317.0,3.6988,66.608,112.21,1129.4,19.694,4.4405,8.3292,1.3867,50.198,4.0632,4.2577,58.105,8.9724,1.4037,34.882,3.581,12.915,0.9426,1.0,2017-02-24T00:00:00Z

Rating

No review details are available.

Pros

- 100+ sources and targets, including PostgreSQL.

- JSON-based for language-neutral app communication.

- Develop your own source and your own targets using Python.

- Uses scripts to process data.

Cons

- Requires technical skills to develop pipelines.

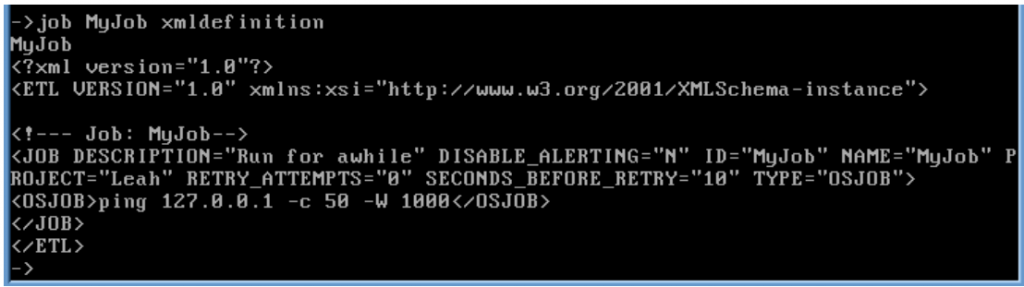

KETL

KETL is a powerful open-source ETL platform for high-performance data integration and processing. It is tailored to handle complex workflows, offering a scalable and robust architecture supporting enterprise-level operations. One of KETL’s key features is its ability to manage large-scale transformations efficiently, making it suitable for businesses with substantial data volumes.

The platform provides built-in support for scheduling, monitoring, and logging, ensuring seamless operation and easier management of ETL processes.

It also includes many prebuilt connectors for databases, flat files, and other data sources, allowing for flexible and straightforward integration. Its modular design enables users to extend its capabilities with custom plugins, making it adaptable to unique business needs.

Here’s a sample KETL command to show the XML definition of MyJob:

Rating

No review details are available.

Pros

- Extracts and loads data from sources that support JDBC, including flat files and relational databases like PostgreSQL.

- Manage the most complex data in minimal time.

- Integrates with security tools to keep your data safe.

Cons

- Hard to find ETL samples and documentation you can study.

- You may get confused with documentation from similar ETL tools named KETL (like Kogenta ETL (KETL) and configurable ETL (kETL)).

Talend Open Studio

Note: As of January 31, 2024, Talend has discontinued support for Talend Open Studio.

However, the platform is still powerful, free, and useful for data integration, transformation, and migration. Its easy-to-use drag-and-drop interface is perfect for anyone without a strong coding background. The platform supports various systems, seamlessly connecting to databases, APIs, and cloud services.

Its flexible design allows users to tackle anything from basic data tasks to more complex, multi-step workflows.

As an open-source tool, it also allows developers to customize and enhance its features, making it a highly adaptable solution for businesses with unique challenges.

Rating

G2 Crowd 4.3/5 (based on 46 reviews)

Pros

- 900+ data integration components. Includes connectors, data transformation, and many more.

- Graphical development of pipelines.

- Extend features with Java programming language.

- Great for small to large datasets.

Cons

- No scheduler for ETL pipelines.

- The app is CPU and memory-intensive, based on some user reviews.

Paid PostgreSQL ETL Tools

Now that we’ve covered the free solutions, let’s take a closer look at the paid platforms, which provide a lot of value in areas like ease of use and support.

Skyvia

Skyvia is a user-friendly, cloud-based ETL tool that makes working with PostgreSQL a breeze. Whether syncing data, importing files, or building complex integrations, its no-code interface ensures you can get the job done without being a tech wizard. The platform supports 200+ different sources, connecting PostgreSQL to everything from CRMs to cloud storage services.

What really sets it apart is the scheduling of recurring imports, synchronizing information between platforms, and even setting up workflows to keep everything running smoothly. The solution also offers powerful transformation abilities to clean and reshape data before it lands in the DBMS.

Rating

G2 Crowd 4.8/5 (based on 242 reviews)

Pros

- Free plans and trials.

- Simple, drag-and-drop designer.

- Simple and complex data transformations.

- Built-in pipeline scheduler.

- Detailed logs and failure alerts that you can easily comprehend.

- Almost zero code querying of data sources.

- 100% cloud. No need to install it.

Cons

- Limits on free usage.

Pricing

- Flexible freemium model.

- Free plan for 10k records/month.

- Starts at $79/month for 1MB.

Talend Cloud

Talend Cloud brings the features you love in Open Studio to the cloud. It is a powerful, cloud-based ETL tool that takes the complexity out of managing PostgreSQL integrations. It handles everything from simple data migrations to advanced workflows, all with a user-friendly interface that combines drag-and-drop simplicity with robust functionality. The solution supports 1000 sources, making it easier to connect PostgreSQL with CRMs, ERPs, and cloud platforms.

One of its standout features is its real-time data integration abilities, ensuring your systems are always up-to-date. With built-in data transformation tools, you can clean, format, and prepare the information without additional software. Plus, its automation features let users schedule tasks, freeing up time and reducing manual effort.

Rating

G2 Crowd 4.2/5 (based on 206 reviews)

Pros

- Easy and fast browser-based graphical pipeline designer.

- Extend or customize your pipeline using Python.

- Talend Trust Score checks data reliability at a glance, over time, or at any point in time.

Cons

- Pricey, according to Gartner reviews.

Pricing

It provides a wide range of plans. Contact Talend Sales for pricing.

Informatica PowerCenter

Informatica PowerCenter is a robust solution designed to handle even the most complex integration workflows. It’s an excellent choice for businesses utilizing PostgreSQL and other databases, offering advanced features to extract, transform, and load information across diverse systems. Its scalability ensures it can accommodate large datasets and complex operations, making it a preferred option for enterprise-level organizations.

Its vast library of prebuilt connectors truly sets it apart, enabling seamless integration with various platforms, including databases and cloud-based applications. Additionally, it provides powerful transformation options to ensure your information is accurate, consistent, and ready for analysis. With automation capabilities, users can easily schedule repetitive processes, reducing manual work and enhancing overall efficiency.

Rating

G2 Crowd 4.4/5 (based on 85 reviews)

Pros

- Build formulas for data transformation instead of coding.

- Drag-and-drop designer and configuration with keyboard shortcuts.

- Uses parallel data processing to handle huge amounts of data.

- Granular access privileges and flexible permission management for security.

- 24/7 support is available.

Cons

- Runtime logs are a bit challenging to read.

- ETL experts transitioning from other tools need to familiarize themselves with the product’s terminology.

- Some user reviews on G2 report that the app can become unresponsive.

Pricing

- Prepaid subscription based on Informatica Processing Unit (IPU).

- Contact sales for more details about IPUs.

Stitch

Stitch is a lightweight and easy-to-use ETL tool for modern data integration. It’s perfect for businesses working with PostgreSQL and other databases, offering a straightforward way to extract, transform, and load the information across platforms. It handles small datasets or large-scale data pipelines with ease.

The solution stands out because of its vast selection of prebuilt integrations, connecting PostgreSQL to hundreds of popular apps, cloud platforms, and data warehouses. Despite its simplicity, it provides essential transformation options to ensure the data is ready for analysis.

Rating

G2 Crowd 4.4/5 (based on 68 reviews)

Pros

- Simple interface to quickly create pipelines.

- Enterprise-grade security and data compliance.

- Extensible platform with the Singer open-source framework.

Cons

- No free version.

Pricing

- Standard pricing starts at $100/month with 1 destination and 10 sources.

Hevo

Hevo is a no-code ETL tool that simplifies integration and makes working with PostgreSQL and other platforms effortless. It provides a seamless way to extract, transform, and load data in real time, making it an excellent choice for businesses that value speed and efficiency. With its scalable architecture, Hevo can handle everything from small datasets to enterprise-level workflows.

What sets Hevo apart is its fully automated pipeline setup, enabling users to connect PostgreSQL with various sources without coding. Its transformation abilities ensure your data is clean, consistent, and analysis-ready. It also offers robust scheduling and monitoring features, so data syncs happen on time without manual intervention.

Rating

G2 Crowd 4.4/5 (based on 254 reviews)

Pros

- 150+ connectors, of which 50 are free.

- Flexible transformation using Python.

- Single-row testing before deployment.

- Easy-to-use forms with a schema mapper and keyboard shortcuts.

- Process huge amounts of data with horizontal scaling.

- 24/7 Support.

- Provides resource guides and video tutorials.

Cons

- PostgreSQL is not included in the free connectors.

- Registration is not allowed with personal email addresses (Outlook, Gmail) or .edu addresses.

- Requires knowing Python to do transformations.

- No drag-and-drop designer for pipelines.

Pricing

- The pricing is flexible and provides a 14-day free trial.

Integrate.io

Integrate.io is a cloud-based ETL tool that is a good fit for businesses using PostgreSQL and other platforms. It offers a no-code interface that lets both technical and non-technical teams extract, transform, and load data with little effort. Its scalability ensures it can handle small tasks and complex enterprise-level workflows.

Its vast library of prebuilt connectors enables seamless integration between PostgreSQL and various cloud applications, databases, and data warehouses. Its robust transformation tools help ensure that your data is accurate, consistent, and ready for analysis. With automation features like scheduled workflows and real-time monitoring, the solution minimizes manual effort while maximizing efficiency.

Rating

G2 Crowd 4.3/5 (based on 201 reviews)

Pros

- Powerful, code-free data transformation.

- Connects to major databases, including PostgreSQL, and other sources.

- ETL, reverse ETL, ELT, CDC, and REST API.

- The UI is easy to use for beginners and experts alike.

Cons

- No on-premise solution.

- No real-time data synchronization capabilities.

- Does not support pure data replication use cases.

- Business emails only to get started.

- Limited connectors.

Pricing

- The pricing is based on the number of connectors.

- A 14-day trial is available.

Pentaho Data Integration

Pentaho Data Integration (PDI) helps businesses seeking to simplify the integration process, especially with platforms like PostgreSQL. It offers a rich set of ETL features for handling tasks of any complexity, from small projects to large-scale enterprise workflows. Its scalable and flexible architecture allows businesses to manage growing data needs efficiently.

One of PDI’s standout features is its intuitive drag-and-drop interface, which lets users build pipelines without extensive coding knowledge. It also provides advanced transformation abilities to easily clean, format, and prepare data for analysis.

Rating

G2 Crowd 4.3/5 (based on 15 reviews)

Pros

- Codeless pipeline development.

- Supports streaming data.

- Wide range of connectors.

- Enterprise-scale load balancing & scheduling.

- Use R, Python, Scala, and Weka for machine learning models.

- Uses Pentaho security or advanced security providers.

- 24/7 support.

Cons

- Technical documentation needs improvement.

- Pricey, according to some reviews in Gartner Peer Insights.

- No built-in data masking for sensitive data, though it can be done with a scripting transformation.

Pricing

- The Pentaho Community Edition is free to try.

- 30-day free trial of Pentaho Enterprise Edition.

- $100/user/month to process 5 million rows. You can also adjust your plan as you grow.

Finding Your Ideal Postgres ETL Tool

Choosing the perfect ETL solution for PostgreSQL boils down to what you need most, whether it’s handling massive data loads, staying within a budget, or matching the team’s technical skills. Look for a tool that:

- Scales with your growth;

- Integrates seamlessly with the data sources;

- Doesn’t make your head spin during setup.

If you’re part of a small team, open-source platforms can do the job without breaking the bank. On the other hand, bigger enterprises might lean toward paid solutions packed with advanced features for high-performance workflows. The key is finding a tool that aligns with your goals so you can streamline data integration and unleash the full power of PostgreSQL.

Optimizing Workflows with PostgreSQL ETL

Imagine you’ve found the tool of your dreams. The next step is optimizing your workflows, so let’s begin.

- Define Clear ETL Processes. Start by outlining data extraction, transformation, and loading requirements. Define specific tasks like data cleaning, validation, and enrichment to maintain data consistency and accuracy.

- Leverage PostgreSQL Extensions. Use extensions like postgres_fdw for handling external data and pg_partman for efficient table partitioning.

- Automate ETL Workflows. Select automation platforms like Skyvia or Apache Airflow to streamline repetitive tasks, reducing manual intervention and errors.

- Optimize Query Performance. Implement indexing, parallel processing, and query optimization techniques to enhance speed and scalability.

- Monitor ETL Pipelines. Use tools to monitor pipeline performance, identify bottlenecks, and ensure real-time data accuracy.
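
As a small illustration of the validation, enrichment, and monitoring points above, the sketch below times each pipeline step with a decorator and records row counts, so slow or lossy steps stand out. The step functions, the `metrics` list, and the 20% tax rate are hypothetical; many ETL tools expose similar information through their own logs and dashboards.

```python
import functools
import time

metrics = []  # one entry per executed step


def monitored(step):
    """Record duration and output row count for a pipeline step."""
    @functools.wraps(step)
    def wrapper(rows):
        start = time.perf_counter()
        result = step(rows)
        metrics.append({
            "step": step.__name__,
            "rows_out": len(result),
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@monitored
def drop_invalid(rows):
    """Validation: keep only rows that have a positive amount."""
    return [r for r in rows if r.get("amount", 0) > 0]


@monitored
def add_tax(rows):
    """Enrichment: add a computed column (the 20% rate is an example value)."""
    return [{**r, "amount_with_tax": round(r["amount"] * 1.2, 2)} for r in rows]


orders = [
    {"id": 1, "amount": 100.0},
    {"id": 2, "amount": -5.0},
    {"id": 3, "amount": 40.0},
]
result = add_tax(drop_invalid(orders))

slowest = max(metrics, key=lambda m: m["seconds"])
for m in metrics:
    print(f'{m["step"]}: {m["rows_out"]} rows in {m["seconds"]:.6f}s')
print("slowest step:", slowest["step"])
```

Comparing `rows_out` between consecutive steps is a cheap way to notice when a validation rule starts dropping more records than expected.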

Conclusion

You must balance usability, performance, security, and price when choosing from this list of PostgreSQL ETL tools. Whether you’re new to data integration or an established ETL expert on another product, this list will help you choose what’s right for your use case. If you want a free tool with an easy-to-use interface, try Skyvia’s free plan before moving to the paid versions, and integrate your on-premise or cloud PostgreSQL database with other online services.

FAQ for ETL Tools for PostgreSQL

Why do we need to integrate PostgreSQL with ETL tools?

Integrating PostgreSQL with ETL tools simplifies data extraction, transformation, and loading from multiple sources. ETL tools enhance automation, reduce manual errors, and improve the efficiency of managing complex data workflows.

Can PostgreSQL be used for data warehousing needs?

Yes, PostgreSQL is often used for data warehousing due to its robust querying capabilities, scalability, and support for large datasets. Features like table partitioning and indexing make it suitable for analytical workloads.

What are some key extensions for PostgreSQL ETL workflows?

Popular extensions include postgres_fdw for integrating external data sources, pg_partman for managing partitions, and pg_stat_statements for monitoring query performance during ETL operations.

What are the best ETL tools for PostgreSQL?

Top ETL tools for PostgreSQL include Skyvia, Apache Airflow, Talend, Hevo, and Integrate.io. Each offers unique features like automation, cloud integration, and user-friendly interfaces.