Analytics drives modern business. It’s the lighthouse that keeps you from drifting aimlessly in the dark. Business intelligence (BI) takes it further – offering a full view of what’s happening now and even a glimpse into what’s coming next. At its best, it feels almost like magic.

But this magic runs on one thing: data. And collecting it from dozens of different systems – each with its own quirks, formats, and interfaces – can be a serious problem in itself, let alone unifying and reconciling it. Data integration addresses these challenges by providing a single, unified source for analysis.

In this article, we’ll break down common data integration approaches for BI, compare their strengths and trade-offs, and help you choose the strategy that fits your business – without hacks or messy workarounds.

Table of Contents

- Why Data Integration is Non-Negotiable for Modern BI

- Core Approaches to Data Integration for BI

- Comparison of Data Integration Approaches

- How to Choose the Right Data Integration Strategy

- Putting It Into Practice: The Role of Integration Platforms

- Conclusion

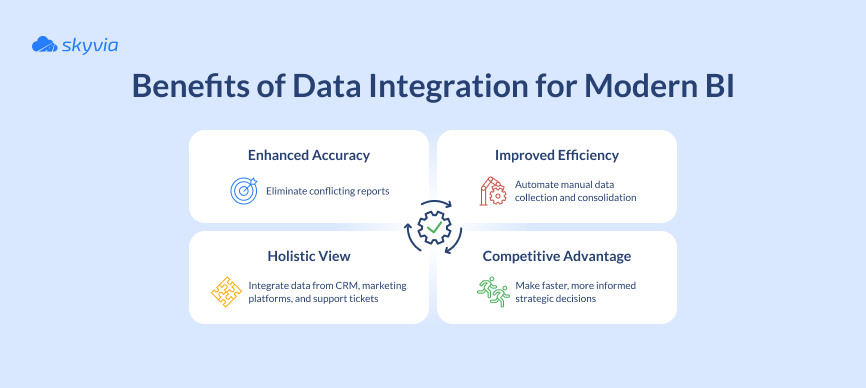

Why Data Integration is Non-Negotiable for Modern BI

Yes, you read that right – not a NICE-to-have, but a MUST-have. Why? First, because modern BI tools thrive on structured data. Although they do accept unstructured input – such as documents, images, emails, videos or social media posts – these sources cannot be plugged in directly the way you would connect a database or an API-ready system.

Typically, such content is stored in a data lake, while its metadata – cleaned, structured, and organized – sits in a data warehouse, where BI tools can query it.

Second, connecting multiple sources directly – especially live ones – makes your ETL/ELT pipelines more complex and slows refresh times. Every extra join and transformation adds latency, and if sources aren’t optimized, queries can crawl.

While there’s technically no hard limit on how many sources you can connect, most companies prefer to standardize their data first, merging it into one curated model before exposing it to BI. The most effective way to do that is to stage data in a high-performance warehouse like Snowflake, BigQuery, or Redshift.

Core Approaches to Data Integration for BI

The five integration methods outlined below are a bit like superheroes in a Hollywood movie – each with its own unique powers. There’s no “one best” approach: each one is second to none in its own arena. The trick is choosing the one that matches your data, your team, and your goals.

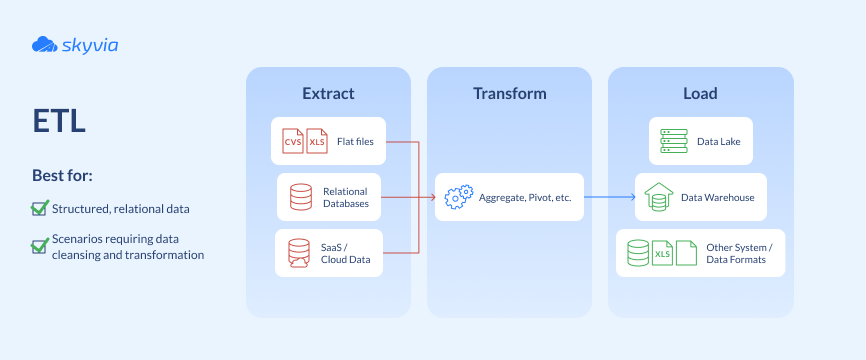

1. ETL (Extract, Transform, Load): The Traditional Workhorse

There’s no shortage of ETL content out there – even on this blog – and for good reason. It’s the primary (or at least earliest widely adopted) method of working with data in an integrated way. Its roots stretch back to the 1960s, when businesses were running early batch jobs on mainframes – essentially doing ETL without calling it that.

The process crystallized in the 1980s with the rise of data warehousing, and by the mid-1990s it had an official toolkit: specialized ETL software. The 2000s were its golden age, as it became the industry standard for building BI systems.

And here’s the thing – it’s still everywhere today. Sure, it now shares the stage with its “cloud cousin” ELT, but ETL hasn’t gone away. It may not wear a cutting-edge badge or come wrapped in trendy buzzwords, but it is still king in its own domain, especially where predictable, structured, and clean matter more than flashy and instant.

And if you want to picture what ETL really feels like in practice, think of it as quilting for data. You’ve got scraps from everywhere – messy, mismatched data from a dozen different places, a motley crew of sizes, colors, patterns, and textures. With ETL, you can turn it into something that actually fits together – structured, consistent, and maybe even beautiful.

Here’s how the stitching works:

- Extract. Gather your scraps. Spreadsheets, API feeds, SQL tables, log files — whatever you can get your hands on. They don’t match, and that’s fine. The goal is to get them all in the same basket.

- Transform. Most of the creative problem-solving happens here. In this stage you trim off weird edges, fix inconsistencies, match up colors, and decide how they’ll all fit together. In other words – this is where your data gets cleaned, formatted, and enriched before storage.

- Load. Finally, you sew it all into the quilt — your data warehouse, database, or wherever it’s going to live. If you’ve done your work right, the pattern makes sense, the pieces line up, and the end result is something you can actually use – whether it’s to keep you warm or drive a dashboard.
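To make the three stitches concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the two tiny "sources" (`CRM_CSV`, `SHOP_ROWS`), the `orders` table, and an in-memory SQLite database standing in for a real warehouse. What matters is the order of operations: rows are cleaned before they are stored.

```python
import csv
import io
import sqlite3

# Hypothetical raw inputs standing in for two mismatched sources.
CRM_CSV = "email,amount\n Ann@Example.com ,10.50\nbob@example.com,n/a\n"
SHOP_ROWS = [{"Email": "ANN@example.com", "total_cents": 250}]


def extract():
    """Gather rows from every source into one list of (source, row) pairs."""
    crm = [("crm", row) for row in csv.DictReader(io.StringIO(CRM_CSV))]
    shop = [("shop", row) for row in SHOP_ROWS]
    return crm + shop


def transform(raw):
    """Clean and unify rows before they reach the warehouse."""
    clean = []
    for source, row in raw:
        if source == "crm":
            email, amount = row["email"], row["amount"]
            try:
                cents = round(float(amount) * 100)
            except ValueError:
                continue  # drop rows whose amount cannot be parsed
        else:
            email, cents = row["Email"], row["total_cents"]
        clean.append((email.strip().lower(), cents))
    return clean


def load(rows, conn):
    """Write the already-clean rows into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders (email TEXT, cents INTEGER)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    conn.commit()


def run_etl(conn):
    """Run the three stages in ETL order: extract, transform, load."""
    load(transform(extract()), conn)
```

Calling `run_etl(sqlite3.connect(":memory:"))` leaves only normalized rows in `orders`; the unparseable `n/a` amount never reaches storage, which is the trade-off ETL makes in exchange for a clean target.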

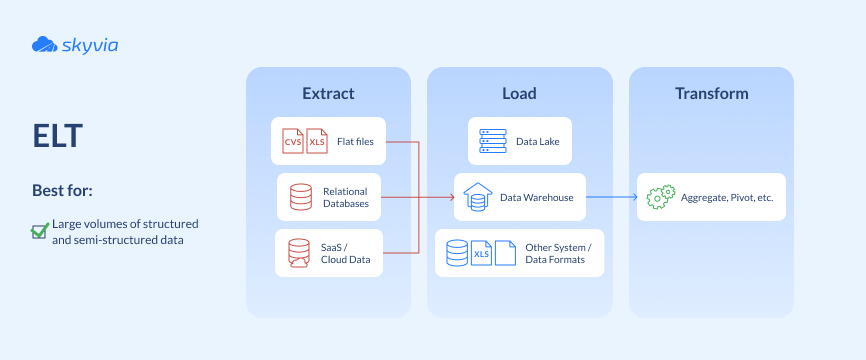

2. ELT (Extract, Load, Transform): The Modern, Cloud-First Approach

ELT may feel like a newcomer, but its roots go back to the same places ETL came from – it just took a different path to the spotlight. For decades, “load first, transform later” wasn’t practical: since compute power was limited, doing heavy transformations inside the warehouse was a luxury few could afford.

That changed in the 2010s with the rise of cloud data warehouses like Snowflake, BigQuery, and Amazon Redshift. Suddenly, you could dump raw data straight into an elastic, scalable environment and transform it on demand. That’s ELT – a modern approach for teams who value flexibility, speed, and have sufficient resources to handle the workload.

Imagine moving house — but instead of sorting, cleaning, and neatly boxing your stuff before the move, you just shovel everything into the truck and dump it straight into the new place. Then, once you’re there (your data warehouse), you unpack, organize, and decide what goes where.

This is exactly what ELT lets you do with your data. The loading is quick because data goes straight into the target system, and the transformations happen afterward using powerful cloud processing engines. The flip side of the coin is cost: storing and querying all that raw data can send your bill skyrocketing.
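The same idea in a minimal Python sketch, with an in-memory SQLite database playing the role of the cloud warehouse. The table names (`raw_orders`, `orders_by_customer`) and the sample rows are hypothetical. Note the reversed order compared with ETL: raw values land first, untouched, and the cleanup runs later as SQL inside the target system.

```python
import sqlite3

# Hypothetical raw feed; values are loaded exactly as they arrive.
RAW_EVENTS = [
    (" Ann@Example.com ", "10.50"),
    ("bob@example.com", "n/a"),
    ("ANN@example.com", "2.50"),
]


def load_raw(conn, events):
    """Load step: copy source rows into a staging table with no cleanup."""
    conn.execute("CREATE TABLE IF NOT EXISTS raw_orders (email TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?)", events)


def transform_in_warehouse(conn):
    """Transform step: build a curated table using the warehouse's own SQL."""
    conn.execute("DROP TABLE IF EXISTS orders_by_customer")
    conn.execute(
        """
        CREATE TABLE orders_by_customer AS
        SELECT LOWER(TRIM(email)) AS email,
               SUM(CAST(amount AS REAL)) AS total
        FROM raw_orders
        WHERE amount GLOB '[0-9]*'   -- skip values that do not start with a digit
        GROUP BY LOWER(TRIM(email))
        """
    )
```

Because `raw_orders` keeps every original row, including the unparseable one, the transformation can be rewritten and re-run later without going back to the source. That is also why storage and compute costs grow with this approach.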

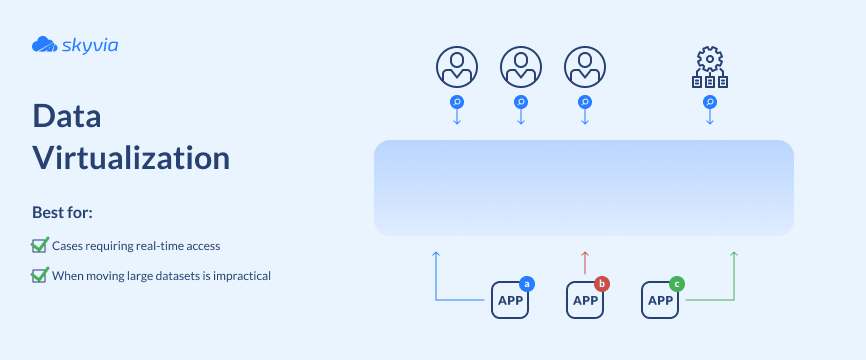

3. Data Virtualization: Integration Without Moving Data

Virtualization as a data integration approach appeared in the late 2000s. It gained traction in the 2010s with mass cloud adoption, API proliferation, and the emergence of big data platforms.

Today, it’s the go-to when speed to insight matters more than building and maintaining a permanent integrated copy – think ad hoc analysis, quick prototypes, or querying data that changes too fast to replicate.

Virtualization platforms connect to each source and build a semantic layer – essentially a virtual metadata model describing what’s in each source, how it’s structured, and how it maps to a unified schema. When a BI tool or analyst runs a query, the virtualization engine:

- Figures out where the required data lives.

- Translates the request and sends optimized sub-queries to each source.

- Retrieves the partial results, joins and transforms them (either at the source or in memory), and returns a single, integrated dataset to the user.
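The three steps above can be sketched in a few lines of Python. This is a toy illustration, not how any particular virtualization product works: two in-memory SQLite databases stand in for remote sources, and `CATALOG`, `make_source`, and `revenue_by_customer` are hypothetical names.

```python
import sqlite3


def make_source(ddl, rows, insert_sql):
    """Create an in-memory database standing in for one remote source."""
    conn = sqlite3.connect(":memory:")
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    return conn


# Two hypothetical sources that stay where they are; nothing is copied up front.
crm = make_source(
    "CREATE TABLE customers (id INTEGER, name TEXT, country TEXT)",
    [(1, "Ann", "DE"), (2, "Bob", "US")],
    "INSERT INTO customers VALUES (?, ?, ?)",
)
billing = make_source(
    "CREATE TABLE invoices (customer_id INTEGER, amount REAL)",
    [(1, 40.0), (1, 60.0), (2, 15.0)],
    "INSERT INTO invoices VALUES (?, ?)",
)

# Semantic layer: maps each logical table to the source that holds it.
CATALOG = {"customers": crm, "invoices": billing}


def revenue_by_customer(country):
    """Answer one logical query by combining sub-queries at request time."""
    # 1. Locate the data and push the filter down to the source that owns it.
    customers = CATALOG["customers"].execute(
        "SELECT id, name FROM customers WHERE country = ?", (country,)
    ).fetchall()
    if not customers:
        return []
    # 2. Send a second sub-query that fetches only the matching invoices.
    ids = [cid for cid, _ in customers]
    placeholders = ",".join("?" for _ in ids)
    totals = dict(
        CATALOG["invoices"].execute(
            "SELECT customer_id, SUM(amount) FROM invoices "
            f"WHERE customer_id IN ({placeholders}) GROUP BY customer_id",
            ids,
        ).fetchall()
    )
    # 3. Join the partial results in memory and return one dataset.
    return [(name, totals.get(cid, 0.0)) for cid, name in customers]
```

Every call goes back to the sources, so the answer is always current, but it is only as fast and as available as the slowest source involved.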

In simple terms, data virtualization can be compared to borrowing books from the library. Instead of making copies of every book you need, you just visit the library and read them where they are.

The virtualization platform is your “card catalog” – it tells you exactly where to look, fetches just the pages you need, and hands them to you on demand. Fast to set up, but if the library’s internet goes down, your reading session is over.

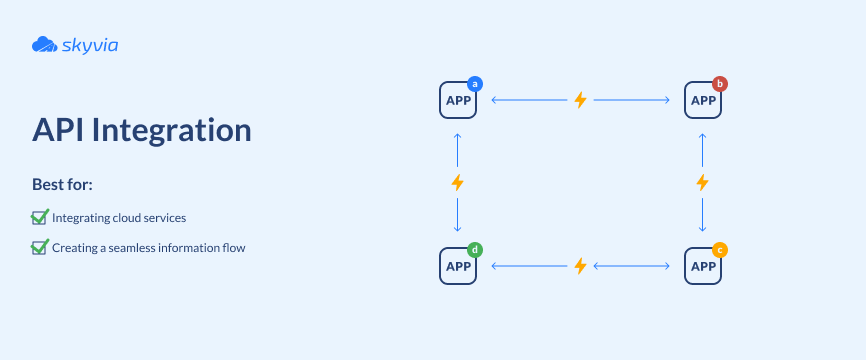

4. API & Application-Based Integration: Connecting Your Tools

The idea of computers talking directly to each other is almost as old as computing itself, so the origins of APIs can be traced back to the 1950s. Over the decades, they’ve evolved from low-level programming interfaces into the modern web APIs that run on HTTP and exchange data in formats like XML and JSON – the technology that powers modern applications and services, especially in distributed systems.

Their quantum leap came in 2000, when Roy Fielding formalized the principles of REST in his doctoral dissertation – an architectural style for communication between client and server over HTTP.

Suddenly, systems were able to connect with each other regardless of their runtime and the programming language they were written in.

It also brought the development process to a whole new level, enabling developers to extend their code functionality by calling API methods from a library instead of writing the logic themselves.

APIs are like interpreters at a big international conference. Every system speaks its own language, and the API translates in real time so everyone understands each other.

You don’t need to store the translations in a giant binder – you just ask the interpreter whenever you need something. But if the interpreter’s busy or the connection’s bad, the conversation slows down.
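For BI purposes, the "translation" usually means turning a nested JSON response into flat rows. Here is a minimal Python sketch of that step. The payload shape, the field names, and `contacts_to_rows` are made up for illustration and do not mirror any specific vendor's API; a real integration would fetch the body over HTTP and deal with authentication, paging, and rate limits.

```python
import json

# Hypothetical response body; a real call would fetch this over HTTP.
SAMPLE_RESPONSE = """
{"contacts": [
  {"id": 7, "properties": {"email": "ann@example.com", "plan": "pro"}},
  {"id": 8, "properties": {"email": "bob@example.com"}}
]}
"""


def contacts_to_rows(body):
    """Flatten a nested JSON payload into rows a BI table can hold."""
    payload = json.loads(body)
    return [
        {
            "id": c["id"],
            "email": c["properties"].get("email"),
            # Optional fields get an explicit default instead of failing.
            "plan": c["properties"].get("plan", "unknown"),
        }
        for c in payload["contacts"]
    ]
```

Reading optional fields with `.get()` is a small hedge against the version changes mentioned above: a field that disappears from the response produces a default value rather than a broken flow.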

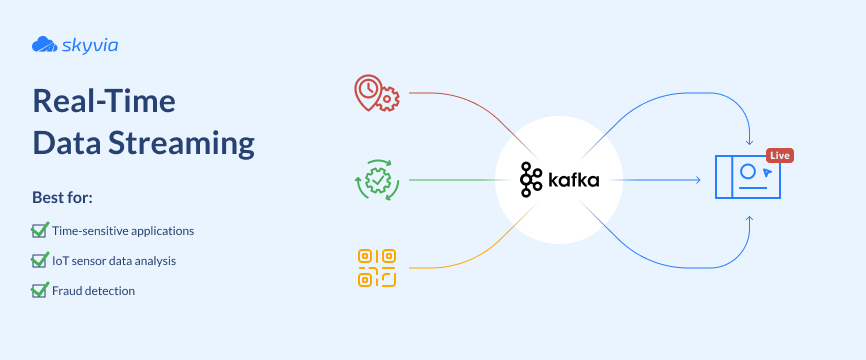

5. Real-Time Data Streaming: For Instant Insights

And finally, real-time streaming is one of the most technically complex integration approaches. Born in the mid-1990s, it has evolved from niche, expensive systems in finance and telecom into a mainstream technology powering fraud detection, stock trading, IoT and sensor data, customer engagement, and more.

In streaming, there’s little room for error and no comfortable window to “retry later,” because data is continuously produced, transmitted, and consumed. BI streaming pipelines must deliver updates in milliseconds to seconds.

And since many operations depend on context, streaming platforms also have to maintain state – a memory of what has already happened. That’s how they can detect unusual login attempts within a time window or track the running total of transactions per account.

All of this creates extra demands on the architecture, which must ensure:

- Fault tolerance while running.

- Exactly-once delivery (no duplicates or drops).

- Real-time state management.
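To show what "maintaining state" looks like in code, here is a minimal single-process sketch in Python of the failed-login example. It is an illustration under stated assumptions, not a production design: `LoginMonitor` and the event fields (`id`, `user`, `ts`, `ok`) are hypothetical, events are assumed to arrive in timestamp order, and the state lives in memory, so it offers no fault tolerance. Skipping already-seen event IDs is one simple way to approximate an exactly-once effect when the same event is delivered twice.

```python
from collections import defaultdict, deque


class LoginMonitor:
    """Keeps per-user state to flag bursts of failed logins in a time window."""

    def __init__(self, window_seconds=60, max_failures=3):
        self.window_seconds = window_seconds
        self.max_failures = max_failures
        self.failures = defaultdict(deque)  # user -> timestamps of recent failures
        self.seen_ids = set()               # event ids already processed

    def process(self, event):
        """Handle one event; return True if the user has reached the limit."""
        if event["id"] in self.seen_ids:
            return False  # duplicate delivery: ignore so state is updated once
        self.seen_ids.add(event["id"])
        if event["ok"]:
            return False
        recent = self.failures[event["user"]]
        recent.append(event["ts"])
        # Evict timestamps that have fallen out of the window.
        while recent and recent[0] <= event["ts"] - self.window_seconds:
            recent.popleft()
        return len(recent) >= self.max_failures
```

Feeding it three failed logins for the same user within a minute makes the third call return `True`, while a redelivered copy of an earlier event changes nothing. Real streaming platforms add what this sketch lacks: durable, checkpointed state, handling of out-of-order events, and a bound on how long event IDs are remembered.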

If batch processing is like reading yesterday’s paper, streaming is like watching the live broadcast. The feed is constant, and your “TV” (BI dashboard) updates the moment something happens. Great for breaking news… but exhausting (and costly) if you don’t actually need to watch every second.

Comparison of Data Integration Approaches

We’ve walked through the five main integration methods in detail. But sometimes it’s easier to see the differences in one place. The table below brings them side by side, comparing their core characteristics at a glance.

| Approach | Latency | Data Volume/Type | Pros | Cons |
|---|---|---|---|---|
| ETL | Minutes to hours (batch) | Structured data, moderate to large volumes | Predictable, clean, structured output; great for historical analysis | Slower refresh; less flexible with unstructured data |
| ELT | Minutes (batch, but faster) | Very large / diverse datasets; raw + structured | Faster setup; scalable with cloud warehouses; keeps raw data for future use | High storage & compute costs; needs powerful warehouse |
| Data Virtualization | Seconds to minutes (query-time) | Mixed data types; distributed sources | No data movement; fast to set up; single “virtual view” | Dependent on source availability; may struggle with huge volumes |
| API & Application-Based Integration | Near real-time (depends on API) | Transactional / app data | Direct app-to-app connectivity; flexible; no need for staging | API limits, downtime, or version changes can break flows |
| Real-Time Data Streaming | Milliseconds to seconds (continuous) | High-velocity event streams (IoT, logs, transactions) | Instant insights; ideal for time-sensitive operations | Technically complex; costly; requires strong engineering skills |

How to Choose the Right Data Integration Strategy

TL;DR – honestly, all the advice here boils down to two things:

- Start with what you already have – your data (structured or unstructured), your team (skills and appetite), and your stack (on-prem, cloud, or hybrid).

- Use common sense – pick the simplest, most cost-effective strategy that’s good enough for your case. Streaming may sound exciting, but if your decisions can wait, it’s probably overkill.

Now, let’s elaborate on these ideas in a bit more detail.

Assess Your Data Sources and Complexity

First, estimate the scope of the chaos. What kind of data are you dealing with? If it sits neatly organized in databases or APIs, that’s one thing. But what if you’ve got unstructured content like documents, images, or IoT streams?

Location matters, too: on-prem, in the cloud, or scattered across both. The more diverse and fragmented your landscape, the more you’ll lean toward abstraction (like virtualization) or centralization (like ETL/ELT).

Consider Your Need for Real-Time Data

Real-time streaming is worth the investment only when instant insights truly matter – fraud detection, IoT telemetry, customer interactions. But if your decisions can wait for updates, streaming just piles unnecessary complexity onto an already complex task.

Evaluate Your Team’s Technical Skills

Technology is all very well, but the real differentiator is the people running it. Choose the one that resonates most with your team’s skills. Do they thrive on coding, debugging, and maintaining pipelines? Or do they breathe a sigh of relief when you mention low-code or no-code tools?

Factor in Scalability and Future Growth

What works for you today may not work when your data doubles or triples. Pick an approach that scales with your business – both in terms of volume and variety of data. Cloud-native methods like ELT and streaming often shine here, while traditional ETL still wins for structured, repeatable, and predictable workloads.

Putting It Into Practice: The Role of Integration Platforms

Understanding the approaches is one thing; implementing them is another. Sure, you can build an integration pipeline by hand – custom-made and perfectly suited for a specific case.

But how will it handle growing volumes of data? Or connect with a tool whose interface isn’t supported? Or be maintained when the genius who wrote it resigns without leaving any documentation?

Chances are, the story won’t end well. At some point, you’ll need to switch to something more solid – most likely a commercial tool. That usually means a serious infrastructure upgrade – an elaborate, time-intensive, and costly process, which on top of that distracts you from the real goal: turning data into insight.

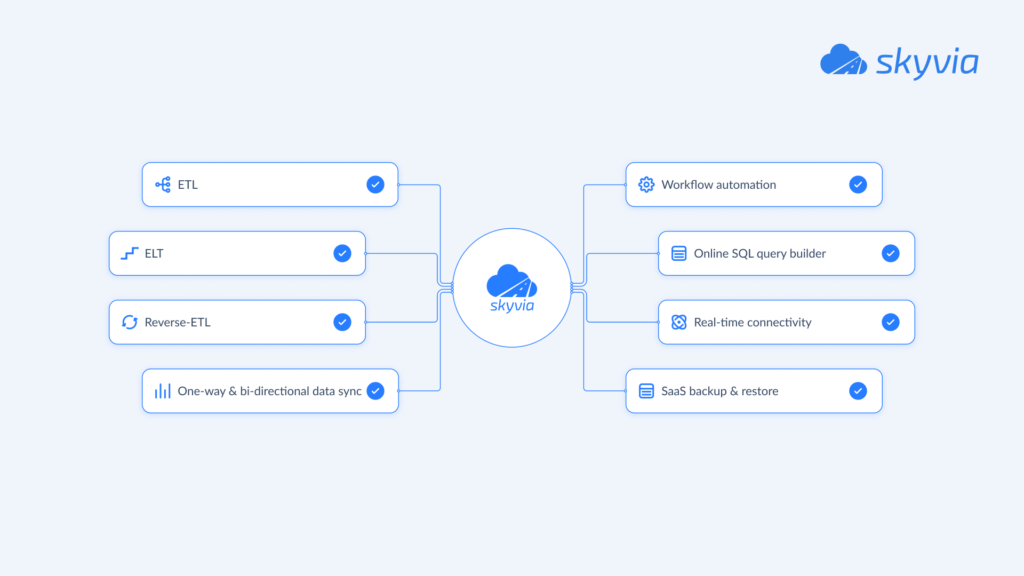

So don’t put the problem on the shelf. If you’re evaluating integration platforms, consider Skyvia as an option. It’s a cloud-based, no-code solution that supports multiple approaches – from ETL pipelines and API-based integration to data replication and synchronization.

This way, it gives you the freedom to choose the method that fits your business without locking yourself into a single pattern.

And the payoffs are well worth it: faster connections between sources, smoother data flows, and more time for your team to focus on analysis rather than infrastructure plumbing.

Conclusion

Although business intelligence may feel like magic, its roots are deep in the real ground: data that’s collected, cleaned, and connected the right way.

The good news is, you have options. From traditional ETL to modern ELT, from virtualization to APIs and streaming – each one has its own strengths and its own time and place.

Pick wisely, and your integration won’t just feed your BI dashboards but light the path ahead for your business.

F.A.Q. for Data Integration in Business Intelligence

What is the key difference between ETL and ELT?

ETL transforms data before loading it into storage; ELT loads raw data first and uses the warehouse’s power to transform afterward.

When is data virtualization a better choice than ETL or ELT?

When you need quick, ad hoc analysis without moving data – ideal for prototypes or querying sources that change too often to replicate.

How does data integration lead to better decision-making?

It eliminates silos, ensures data consistency, and gives BI tools a complete, accurate picture – enabling decisions based on facts, not guesses.

Which data integration approach is best for cloud applications like Salesforce or HubSpot?

API and application-based integration is the go-to, since these platforms expose APIs designed for seamless, near real-time connectivity.